Today we cover another important topic: search engine development cost, the real expenses behind creating a search engine like Google, and how to plan your own project effectively.

At Azati, we develop and deliver commercial search engines. These engines are very different from public web search engines such as Google, Yahoo, Bing, Baidu, and others. Without a strong technical background, many aspects of estimating the cost of a professional search engine are far from obvious.

Key Steps and Technologies in Search Engine Development

In this article, we’ll explain the essential steps to create a search engine like Google and the key factors that determine the overall development cost.

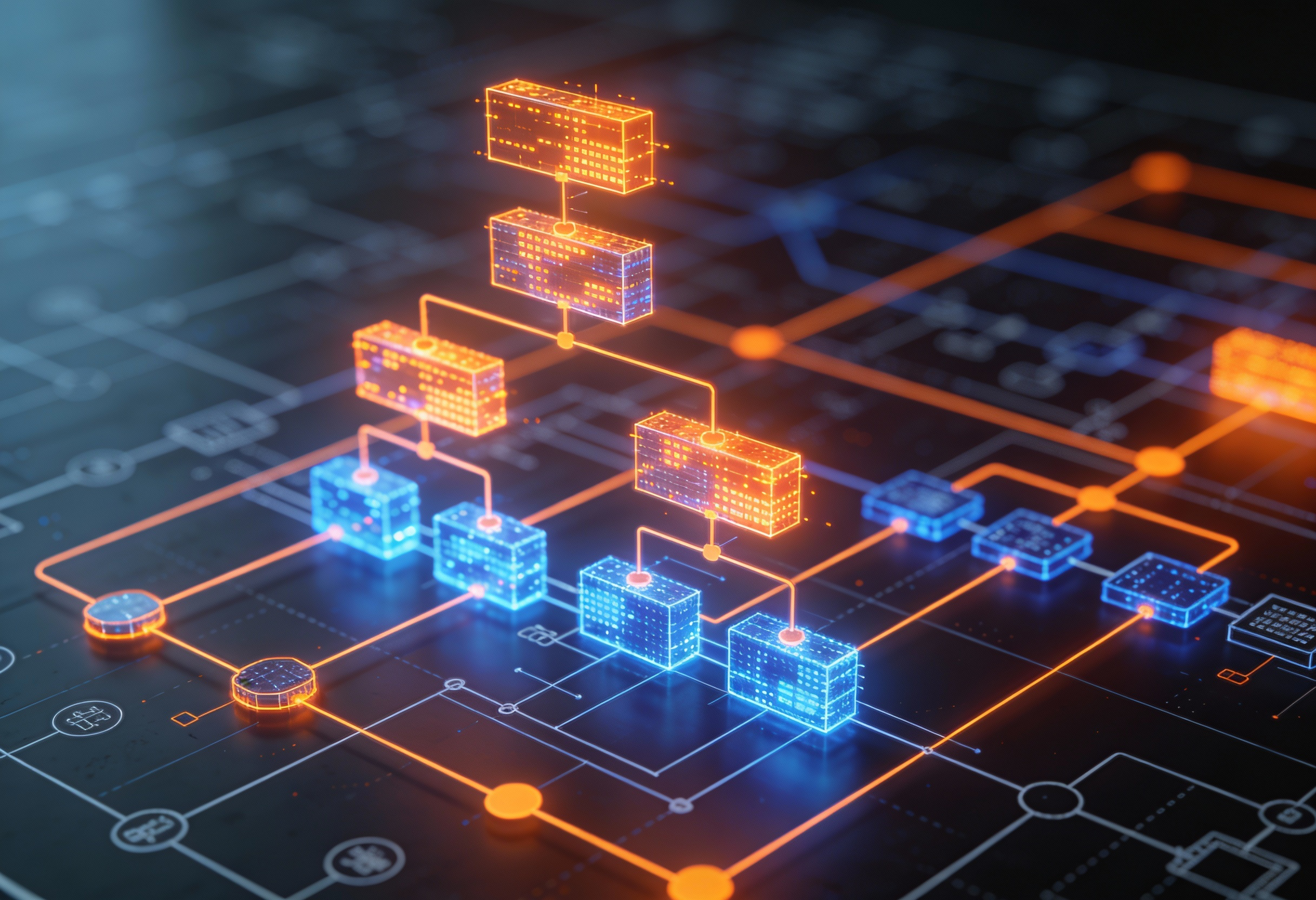

The Four Core Steps to Build a Search Engine

To find the data related to a user query, every custom search engine should:

- Determine the pattern: Identify what the user is searching for;

- Download the page from the database: Retrieve relevant documents;

- Analyze the page (in search of the pattern): Process and match content;

- Build a Search Engine Results Page (SERP): Display results.
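The four steps above can be sketched as a naive linear scan. This is a minimal Python sketch, assuming a small in-memory dict stands in for the database; the function names and sample documents are hypothetical and do not describe any particular product.

```python
import re
from dataclasses import dataclass


@dataclass
class Result:
    doc_id: str
    snippet: str
    hits: int


# Hypothetical in-memory "database" standing in for real document storage.
DOCUMENTS = {
    "page-1": "How to estimate search engine development cost.",
    "page-2": "A guide to building a product catalog.",
    "page-3": "Search engine cost drivers: servers, storage, and search indexing.",
}


def determine_pattern(query: str) -> re.Pattern:
    """Step 1: turn the query words into one case-insensitive regex."""
    words = [re.escape(w) for w in query.split()]
    return re.compile("|".join(words), re.IGNORECASE)


def download_page(doc_id: str) -> str:
    """Step 2: retrieve the document body (here, a dict lookup)."""
    return DOCUMENTS[doc_id]


def analyze_page(text: str, pattern: re.Pattern) -> int:
    """Step 3: scan the full text and count pattern matches (substring matches)."""
    return len(pattern.findall(text))


def build_serp(query: str, limit: int = 10) -> list[Result]:
    """Step 4: rank matching documents by hit count and return the SERP."""
    if not query.split():
        return []
    pattern = determine_pattern(query)
    results = []
    for doc_id in DOCUMENTS:
        text = download_page(doc_id)
        hits = analyze_page(text, pattern)
        if hits:
            results.append(Result(doc_id, text[:60], hits))
    results.sort(key=lambda r: r.hits, reverse=True)
    return results[:limit]
```

With the sample data, `build_serp("search cost")` ranks page-3 (3 hits) above page-1 (2 hits). Every query reads every document in full, so the cost grows with both the number and the size of the pages.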

⚠️ Performance Bottlenecks:

There are two bottlenecks here, and both are related to the page size:

- It might take some time to download the page (document);

- Finding the pattern usually takes a long time if you use standard search approaches that scan the whole text.

Real-World Processing Speed Examples

Typically, it takes about 2 ms to process a document (an HTML page) of a WordPress website (written in PHP). Suppose the site has about 200 pages: they are processed in about 400 ms, a little under half a second. That seems fast enough.

But now imagine that we deal with an e-book library that stores millions (!) of books, each with hundreds of pages. Unsurprisingly, processing takes far longer here, because we do not download a single page from the database: we download the whole e-book at once.
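The per-page figure makes the scaling easy to check. This Python sketch multiplies it out, assuming purely sequential processing at a constant 2 ms per page; the library size (1,000,000 books of 300 pages each) is a hypothetical stand-in for "millions of books with hundreds of pages".

```python
def processing_time_seconds(documents: int, pages_per_document: int, ms_per_page: float) -> float:
    """Estimate sequential processing time: total pages x time per page."""
    return documents * pages_per_document * ms_per_page / 1000


# WordPress site from the text: 200 single-page documents at 2 ms each.
site = processing_time_seconds(documents=200, pages_per_document=1, ms_per_page=2)
print(f"Website: {site:.1f} s")  # Website: 0.4 s

# Hypothetical e-book library: 1,000,000 books x 300 pages, same 2 ms per page.
library = processing_time_seconds(documents=1_000_000, pages_per_document=300, ms_per_page=2)
print(f"Library: {library:,.0f} s, about {library / 86_400:.1f} days")
# Library: 600,000 s, about 6.9 days
```

Under these assumptions, a scan that feels instant on a small website grows to roughly a week of processing time, which is why large collections need smarter indexing rather than a full scan per query.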

The Challenge of Unprocessable Content

There is another complication: many documents cannot be processed quickly by a search engine, including images, videos, and encrypted formats.

📊 Content Processing Difficulty:

| Content Type | Processing Speed | Indexing Difficulty |
|---|---|---|
| Text/HTML | Fast (about 2 ms/page) | Low |
| PDF Documents | Medium (5-10 ms) | Medium |
| Images | Slow (requires OCR) | High |
| Videos | Very Slow | Very High |
| Encrypted Files | N/A | Cannot Process |
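In practice, an indexer routes each file to a different processing path depending on its type. This is a minimal Python sketch of that routing; the extension map is a hypothetical simplification (real systems usually check MIME types or file signatures), and the difficulty labels are taken from the content-processing table.

```python
from pathlib import Path

# Hypothetical extension-to-category map; extensions can lie, so real
# indexers usually inspect MIME types or file signatures instead.
CATEGORY_BY_EXTENSION = {
    ".html": "text", ".htm": "text", ".txt": "text",
    ".pdf": "pdf",
    ".jpg": "image", ".jpeg": "image", ".png": "image",
    ".mp4": "video", ".mov": "video",
    ".gpg": "encrypted",
}

# Difficulty labels follow the content-processing table in the text.
DIFFICULTY = {
    "text": "Low",
    "pdf": "Medium",
    "image": "High",
    "video": "Very High",
    "encrypted": "Cannot Process",
    "unknown": "Unknown",
}


def plan_indexing(paths: list[str]) -> dict[str, list[str]]:
    """Group files into indexing queues by difficulty; unrecognized types go to "Unknown"."""
    queues: dict[str, list[str]] = {}
    for path in paths:
        category = CATEGORY_BY_EXTENSION.get(Path(path).suffix.lower(), "unknown")
        queues.setdefault(DIFFICULTY[category], []).append(path)
    return queues
```

Keeping separate queues lets cheap text documents be indexed immediately while expensive work such as OCR or video processing runs on its own schedule.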

Lessons from Google Search Engine Development and Modern Algorithms

You might have wondered: "Why can't Google show us everything we want? Can I make a search engine that finds relevant information better?"

Yes, you can, but the development cost and complexity depend on your goals. Every year, the Google search algorithm becomes more accurate and advanced. Yet even as search quality improves, many files still cannot be processed today. That is why both public and commercial search engines require careful engineering of indexing algorithms, crawler technology, and database optimization to find relevant data.

Beyond the Price Tag: Factors That Influence Search Engine Development Cost

From our point of view, the search engine development cost is not the only factor customers should care about when planning a project. Another aspect must be considered as well: long-term maintenance and scalability.

Understanding Search Hub Infrastructure

Google's search operations run on hundreds of thousands of servers, which is why the cost to develop a search engine like Google runs into many millions of dollars. Smaller commercial solutions can use optimized infrastructure, which reduces costs dramatically.

With this capacity, Google processes the same data over and over again so that the SERP fits the user query. This is an effective way to monitor changes, especially when there are billions of pages to track.
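One common way to make repeated reprocessing cheaper is to store a content hash for each URL and re-index a page only when its hash changes. This Python sketch shows that technique (SHA-256 content hashing via `hashlib`); it is an illustration, not a description of Google's internal pipeline, and the URLs used with it are hypothetical. It does not handle deleted pages.

```python
import hashlib


def fingerprint(content: str) -> str:
    """Return the SHA-256 hex digest of a page's content."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest()


def recrawl(pages: dict[str, str], known: dict[str, str]) -> list[str]:
    """Return URLs whose content is new or changed since the previous pass.

    `pages` maps URL -> freshly downloaded content.
    `known` maps URL -> digest from the previous pass and is updated in place.
    """
    changed = []
    for url, content in pages.items():
        digest = fingerprint(content)
        if known.get(url) != digest:
            changed.append(url)
            known[url] = digest
    return changed
```

Only the URLs returned by `recrawl` need to go back through the expensive analysis step; unchanged pages are skipped after a single hash comparison.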

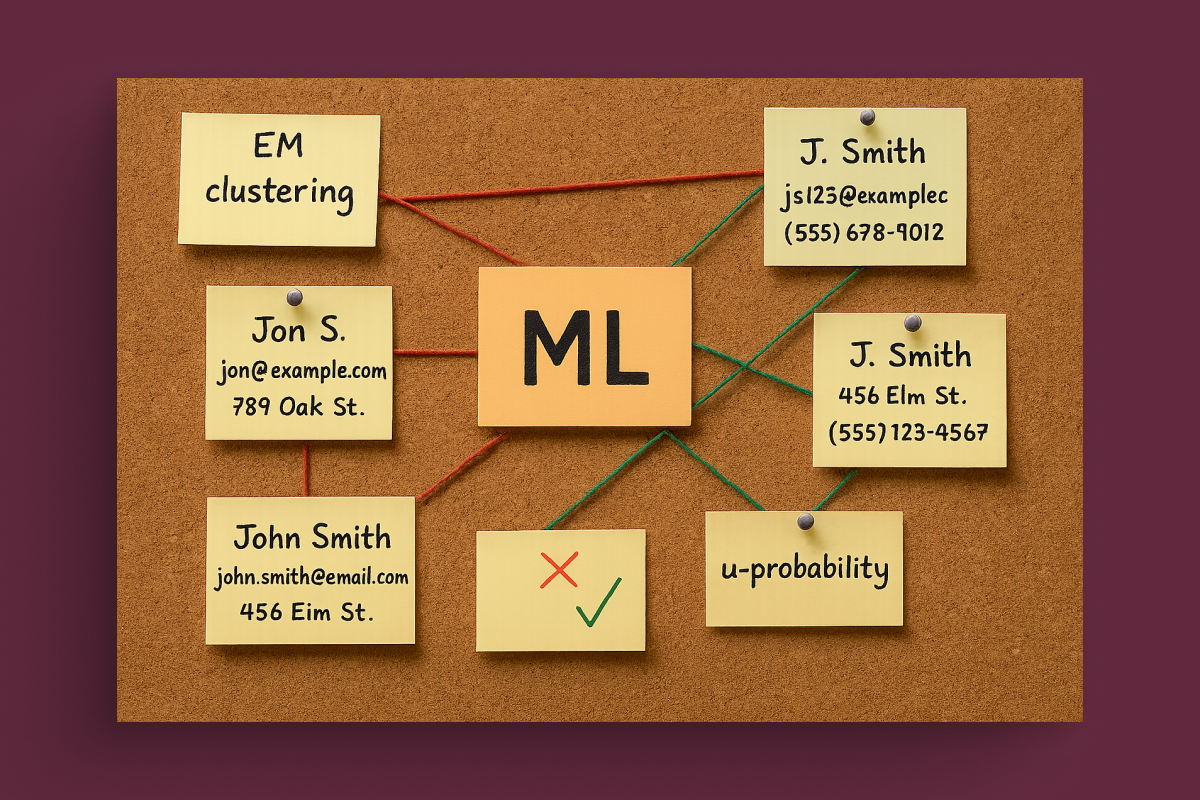

The "Footprint" Approach: How Modern Search Engines Work

Large search engine developers use complex algorithms to look for "footprints" in the document. For example, we do not need to collect all the data about the book when we can spot the key thesis in its summary.

This way, we identify the footprint that contains the necessary data (author, title, summary, brief description, keywords, publication data, etc.) and add it to a separate database.

When a user asks Google to find something, the system first looks for the pattern in the footprint database. If it does not find a matching answer, it performs a deep search over the full documents, and in that case the results page is generated more slowly.
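The two-tier lookup described above can be sketched as follows: a small index built only from footprint fields answers most queries, and a full-text scan runs only when the footprint index has no match. This is a minimal Python sketch; the `Book` fields and class names are hypothetical, and a real system would also index the deep-search tier rather than scanning it.

```python
import re
from dataclasses import dataclass, field


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"\w+", text.lower())


@dataclass
class Book:
    title: str
    author: str
    summary: str
    full_text: str


@dataclass
class FootprintSearch:
    books: list[Book]
    # Footprint index: word -> positions of books whose title, author,
    # or summary contains that word. The full text is NOT indexed here.
    footprints: dict[str, set[int]] = field(default_factory=dict)

    def __post_init__(self) -> None:
        for i, book in enumerate(self.books):
            for word in tokenize(f"{book.title} {book.author} {book.summary}"):
                self.footprints.setdefault(word, set()).add(i)

    def search(self, query: str) -> tuple[list[str], str]:
        """Return matching titles and which path ("footprint" or "deep") found them."""
        words = tokenize(query)
        if not words:
            return [], "footprint"
        # Fast path: books whose footprint contains every query word.
        hits = set.intersection(*(self.footprints.get(w, set()) for w in words))
        if hits:
            return sorted(self.books[i].title for i in hits), "footprint"
        # Slow path: deep search reads the full text of every book.
        deep = []
        for book in self.books:
            text_words = set(tokenize(book.full_text))
            if all(w in text_words for w in words):
                deep.append(book.title)
        return deep, "deep"
```

The footprint index stays small because it holds only a few fields per book, so most lookups never touch the full text.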

Cost Breakdown: How Much Does It Cost to Build a Search Engine?

Here’s what determines your final search engine development cost and how it compares to building a Google-scale solution.

Nobody knows the exact figure for a Google-scale engine. The only thing we know for sure is that it costs a lot.

Google keeps deploying powerful new servers to process data faster, more accurately, and more securely, so that even complex, in-depth queries return precise results almost instantly.

Two Proven Approaches for Commercial Search Engines

We have looked at how Google works. Now let’s see how commercial search engines process data.

We can use two approaches:

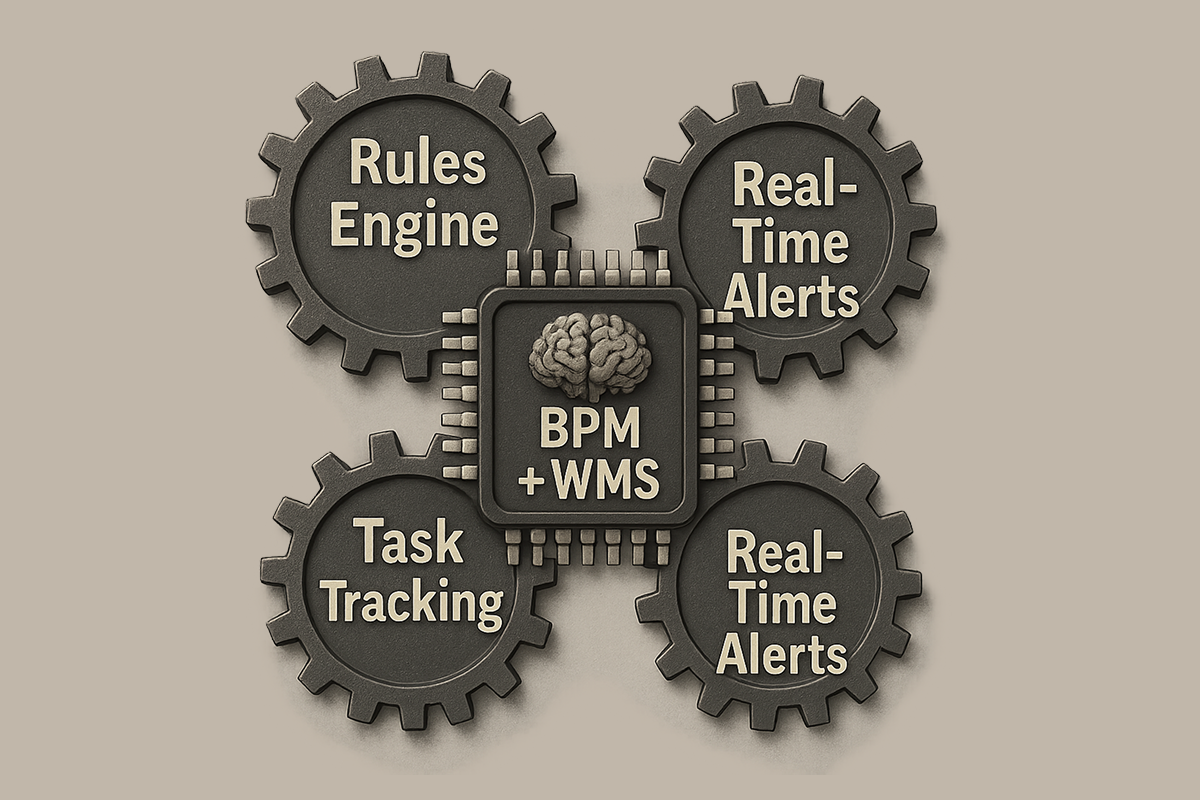

Approach 1: High-Performance Search Engine

Develop a lightning-fast search engine built on solid mathematics, modern databases, and SSD storage, and written in a fast programming language such as C++.
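As one illustration of the "solid mathematics" behind this approach, this is a minimal Python sketch of an inverted index scored with Okapi BM25, a standard ranking function. BM25 is our example choice, not necessarily what any given product uses; the defaults k1 = 1.2 and b = 0.75 are common conventions, and Python is used here only for readability where the approach itself calls for a faster language such as C++.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"\w+", text.lower())


class BM25Index:
    """Inverted index with Okapi BM25 scoring."""

    def __init__(self, docs: dict[str, str], k1: float = 1.2, b: float = 0.75) -> None:
        self.k1, self.b = k1, b
        self.doc_len: dict[str, int] = {}
        # term -> {doc_id: term frequency in that document}
        self.postings: dict[str, dict[str, int]] = defaultdict(dict)
        for doc_id, text in docs.items():
            tokens = tokenize(text)
            self.doc_len[doc_id] = len(tokens)
            for term, tf in Counter(tokens).items():
                self.postings[term][doc_id] = tf
        self.avg_len = sum(self.doc_len.values()) / max(len(self.doc_len), 1)

    def idf(self, term: str) -> float:
        """Inverse document frequency (the +1 variant keeps it positive)."""
        n = len(self.postings.get(term, {}))
        total = len(self.doc_len)
        return math.log((total - n + 0.5) / (n + 0.5) + 1)

    def search(self, query: str, limit: int = 10) -> list[tuple[str, float]]:
        """Return (doc_id, score) pairs, best first."""
        scores: dict[str, float] = defaultdict(float)
        for term in set(tokenize(query)):
            idf = self.idf(term)
            # Only documents that contain the term are visited.
            for doc_id, tf in self.postings.get(term, {}).items():
                norm = 1 - self.b + self.b * self.doc_len[doc_id] / self.avg_len
                scores[doc_id] += idf * tf * (self.k1 + 1) / (tf + self.k1 * norm)
        return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:limit]
```

The design point is that a query touches only the posting lists of its own terms, so documents containing none of the query words cost nothing at query time.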

Approach 2: Footprint Database

Develop a "footprint" database that stores compact document summaries for optimized pattern matching.

The choice between these two approaches affects the search engine development cost. Customers usually prefer the first one: it is more accurate, though slightly more expensive.

Average Budgets and Price Ranges for Custom Search Engine Development

Search Engine Pricing Breakdown

Option 1: DIY - Build Your Own Search Engine

If you want to build a search engine from scratch, for example in Python or PHP, you can learn how for free through courses on platforms such as Udemy, Mindvalley, or edX; paid courses cost up to $100. You will need some programming skills, though.
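A from-scratch project usually starts by turning raw HTML into indexable text and a list of links to crawl next. This is a minimal sketch using only Python's standard-library `html.parser`; the `PageParser` class and `parse_page` helper are hypothetical names, and real pages need more care (character encodings, malformed markup, resolving relative URLs).

```python
from html.parser import HTMLParser


class PageParser(HTMLParser):
    """Collect visible text and outgoing links from one HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.text_parts: list[str] = []
        self.links: list[str] = []
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text outside scripts and styles.
        if not self._skip_depth and data.strip():
            self.text_parts.append(data.strip())


def parse_page(html: str) -> tuple[str, list[str]]:
    """Return (visible text, links) for an HTML document."""
    parser = PageParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.text_parts), parser.links
```

The extracted text can then be tokenized and added to an index, while the links feed the crawler's queue of pages to download next.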

Best For: Learning purposes, small personal projects, developers wanting to understand search fundamentals.

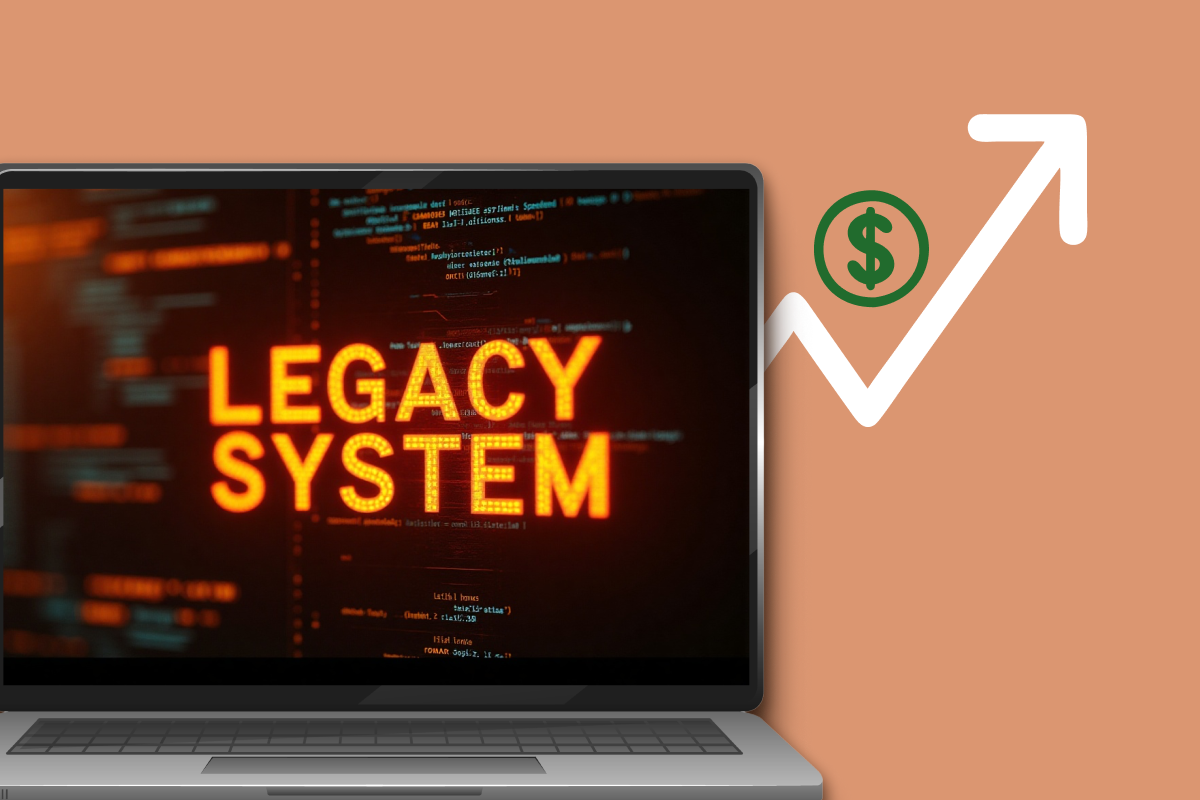

Option 2: Google-Scale Search Engine

If you want to build a search engine like Google (with decent search quality), we estimate it might cost about $100M for the prototype alone, including servers, bandwidth, colocation, electricity, and so on. Maintenance of the existing cluster may cost up to $25M per year.

Best For: Large corporations, tech giants, global search platforms.

Option 3: Commercial Business Search Engine ⭐ Most Popular

If you want to create a search engine for your business, whether you work in insurance, bioinformatics, healthcare, e-commerce, or another industry, the development cost typically ranges from $10,000 to $60,000, with a low maintenance fee.

Best For: Medium to large businesses, e-commerce platforms, enterprise databases, specialized industries.

Search Engine Use Cases by Industry

Search Engine Use Case Examples:

- E-commerce: Product search with filters, autocomplete, and recommendations;

- Healthcare: Medical records search, drug information databases;

- Legal: Case law research, document discovery systems;

- Real Estate: Property search with multiple criteria;

- Education: Course catalogs, research paper databases;

- HR/Recruiting: Resume search, talent acquisition platforms;

- Media: Content libraries, video archives;

- Enterprise: Internal knowledge bases, document management.
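For the e-commerce case, two common building blocks are structured filters and prefix autocomplete. This is a minimal Python sketch under simplifying assumptions: products live in memory, the `Product` fields are hypothetical, and autocomplete uses binary search (`bisect`) over sorted lowercase names rather than a dedicated trie or search server.

```python
import bisect
from dataclasses import dataclass
from typing import Optional


@dataclass
class Product:
    name: str
    category: str
    price: float


class ProductSearch:
    def __init__(self, products: list[Product]) -> None:
        self.products = products
        # Sorted lowercase names turn prefix lookup into a binary search.
        self.names = sorted(p.name.lower() for p in products)

    def autocomplete(self, prefix: str, limit: int = 5) -> list[str]:
        """Return up to `limit` lowercase product names starting with `prefix`."""
        prefix = prefix.lower()
        i = bisect.bisect_left(self.names, prefix)
        out = []
        while i < len(self.names) and len(out) < limit and self.names[i].startswith(prefix):
            out.append(self.names[i])
            i += 1
        return out

    def search(self, text: str = "", category: Optional[str] = None,
               max_price: Optional[float] = None) -> list[Product]:
        """Filter products by name substring, exact category, and price ceiling."""
        text = text.lower()
        return [
            p for p in self.products
            if text in p.name.lower()
            and (category is None or p.category == category)
            and (max_price is None or p.price <= max_price)
        ]
```

Because all names sharing a prefix sit next to each other in the sorted list, autocomplete only reads the matching slice instead of scanning the whole catalog.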

Comparison: Google vs Commercial Search Engines

| Feature | Google-Scale | Commercial Business |
|---|---|---|
| Initial Cost | $100M+ | $10,000-$60,000 |
| Annual Maintenance | $25M+ | $2,000-$10,000 |
| Data Scale | Billions of pages | Thousands to millions |
| Infrastructure | Massive server farms | Cloud or dedicated servers |
| Development Time | Years | 3-6 months |
| Customization | Limited | Fully customizable |
| Best For | Global public search | Specific business needs |
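Adding maintenance to the initial cost shows how far apart the two tiers are over time. This sketch uses the figures from the comparison table; the three-year horizon is our assumption, and real budgets also include items the table does not list.

```python
def total_cost(initial: float, annual_maintenance: float, years: int) -> float:
    """Initial build cost plus maintenance over the given number of years."""
    return initial + annual_maintenance * years


# Figures from the comparison table; the 3-year horizon is an assumption.
YEARS = 3
options = {
    "Commercial (low end)": total_cost(10_000, 2_000, YEARS),
    "Commercial (high end)": total_cost(60_000, 10_000, YEARS),
    "Google-scale (table figures)": total_cost(100_000_000, 25_000_000, YEARS),
}

for name, cost in options.items():
    print(f"{name}: ${cost:,.0f}")
# Commercial (low end): $16,000
# Commercial (high end): $90,000
# Google-scale (table figures): $175,000,000
```

Even at the high end, three years of a commercial solution cost a small fraction of a single year of Google-scale maintenance.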

Final Thoughts: Estimating Your Search Engine Development Cost

As you can see, the cost of search engine development depends on your purpose and scale.

The answer to the question "how to develop a search engine like Google" involves many nuances that depend entirely on your needs, your budget, and your main objective: creating your own search engine or competing with the global leaders.

Quick Decision Framework:

Choose DIY Learning ($0-$100) if:

- You want to learn programming;

- You are building a personal project;

- You have 3-6 months to dedicate.

Choose Commercial Development ($10K-$60K) if:

- You have specific business needs;

- You need professional support;

- You want to create a search engine for your database;

- You require customization and scalability.

Choose Enterprise/Google-Scale ($100M+) if:

- You are targeting the global market;

- You need to process billions of documents;

- You plan to compete with major search engines.

Need help estimating your search engine development cost? Get in touch with Azati and let's build a solution that works for your data and users.