In 2026, developers are shipping code faster than ever. According to industry research, 41% of all code written in 2025 was AI-generated. AI coding assistants like GitHub Copilot, Claude Code, and Cursor have become as ubiquitous as IDEs themselves, with 84% of developers using or planning to use AI tools in their development workflow.

This is the era of vibe coding: writing software guided by intuition, AI suggestions, and rapid iteration rather than deliberate design and rigorous review. It feels productive. It feels modern. It feels like the future.

But there's a problem: organizations are discovering that speed without security creates a hidden crisis. The technical debt is mounting. The security vulnerabilities are multiplying. And "moving fast" without robust code review best practices is becoming catastrophically expensive.


What Is Vibe Coding? And Why It's Everywhere

Vibe coding is the practice of writing software through rapid AI-assisted iteration: generating code quickly, testing visually, and shipping when "it feels right" rather than when it passes rigorous quality gates. It's characterized by:

- Heavy reliance on AI code suggestions accepted with minimal scrutiny;

- Minimal or skipped peer review to maintain velocity;

- Focus on feature completion over code maintainability;

- Visual/functional testing instead of comprehensive test coverage;

- "Ship it and fix later" mentality enabled by continuous deployment.

It's not that developers are lazy or reckless. The tools make it easy to generate working code so quickly that traditional code review processes feel like friction. When AI can write a function in 30 seconds, spending 20 minutes reviewing it feels inefficient.

The Hidden Costs: What Vibe Coding Without Review Actually Costs

The appeal of AI-generated code is obvious: dramatically increased developer productivity. But the costs are less visible, until they're not. Let's quantify what organizations are actually paying.

1. Security Vulnerability Costs

The Problem: AI models reproduce insecure patterns from training data.

The Cost: The average data breach costs $4.45M in 2026, and 48% of AI-generated code contains vulnerabilities.

2. Technical Debt Accumulation

The Problem: AI generates working but unmaintainable code.

The Cost: Code churn is expected to double in 2026, alongside a 7.2% decrease in delivery stability.

3. Increased Review Burden

The Problem: Reviewing AI-generated code takes more effort than reviewing human-written code.

The Cost: Among junior developers, 45% of committed code is AI-assisted, yet they struggle to review it thoroughly.

4. Production Incident Costs

The Problem: AI misses edge cases and error handling.

The Cost: 46-68% of developers report quality issues or incorrect outputs from AI tools.

5. Knowledge Transfer Breakdown

The Problem: AI-generated code lacks context and documentation.

The Cost: New team members can't understand the rationale behind the codebase, slowing onboarding by 40-60%.

6. Quality Regression Over Time

The Problem: Without review, code quality metrics degrade continuously.

The Cost: Maintenance burden grows exponentially, not linearly.


The Trust Paradox: Developers Don't Trust AI, But Ship It Anyway

Here's the fascinating contradiction: research shows that only 29-46% of developers trust AI results. About 46% explicitly say they do not fully trust AI-generated code, and only 3% "highly trust" AI outputs.

Yet the same developers continue shipping that code to production, often without thorough review. Why?

- Velocity Pressure: Organizational metrics reward speed over quality;

- Review Bottlenecks: Peer code review creates queues that slow deployment;

- False Confidence: "It works in my tests" feels like validation;

- Diffused Responsibility: "The AI generated it" creates psychological distance from quality.

| Code Source | Trust Level | Review Requirement | Typical Outcome |
|---|---|---|---|
| Senior Developer | High | Light review, context check | Maintainable, well-designed |
| Junior Developer | Medium | Thorough review, mentoring | Learning opportunity, quality varies |
| AI Assistant (Reviewed) | Medium | Security scan, logic validation | Functional but needs refinement |
| AI Assistant (Unreviewed) | Low | ❌ Skipped | Hidden vulnerabilities, technical debt |

Code Review Best Practices for the AI Era

The solution isn't to abandon AI coding tools; they genuinely improve developer productivity when used properly. The solution is to evolve code review best practices to match the new reality of AI-augmented development.

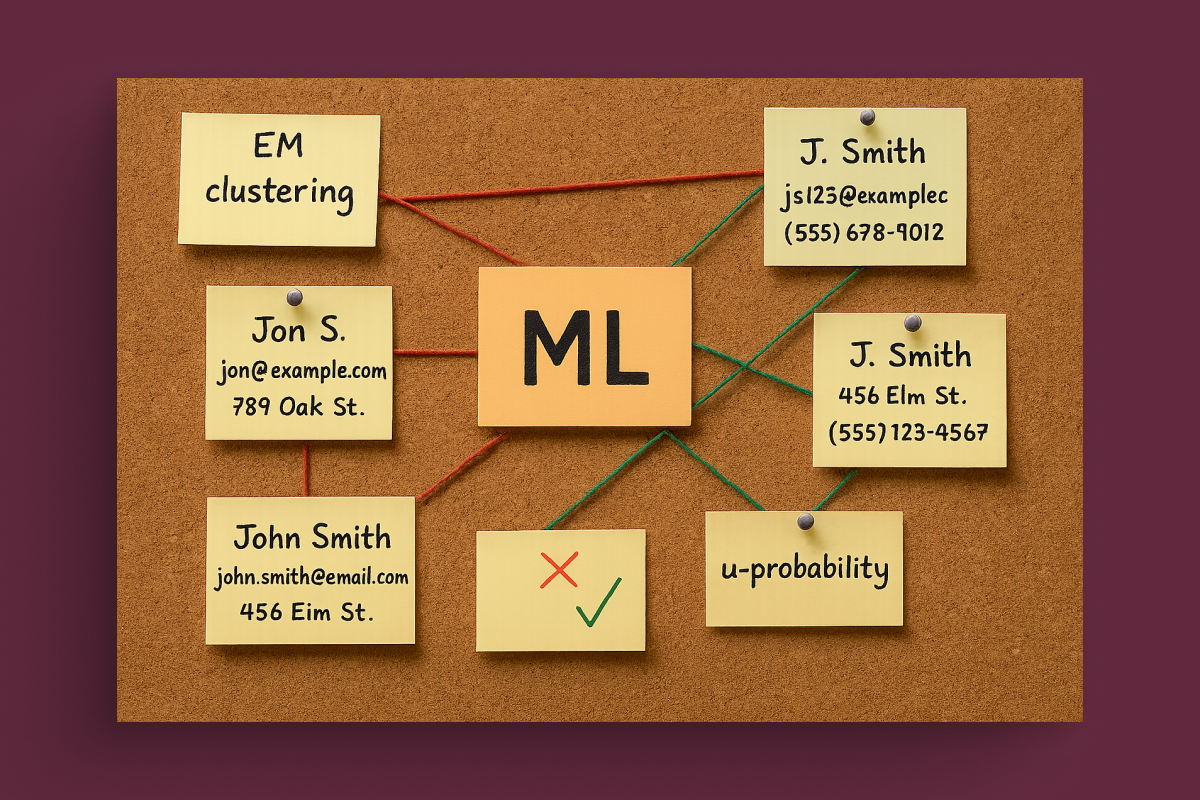

1. Distinguish AI-Generated from Human-Written Code

Modern code review tools need to identify which code came from AI suggestions and which was written by humans. According to 2026 research, teams using AI-assisted code review report 35% greater quality improvements when they can distinguish between code sources.

Implementation:

- Use tools that tag AI-generated code segments in pull requests;

- Require explicit developer sign-off on AI suggestions;

- Track AI acceptance rates to identify quality patterns.
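Tagging and tracking depend on your tooling, but the core bookkeeping is simple. The sketch below assumes a hypothetical team convention in which developers add an `AI-Assisted: yes` trailer to commit messages; `Signed-off-by:` is the standard trailer that `git commit -s` appends, used here as the explicit sign-off. The function and class names are illustrative, not part of any existing tool.

```python
import re
from dataclasses import dataclass

# Hypothetical team convention: AI-assisted commits carry an "AI-Assisted: yes" trailer.
AI_TRAILER = re.compile(r"^AI-Assisted:\s*yes\s*$", re.IGNORECASE | re.MULTILINE)
# "Signed-off-by:" is the trailer appended by `git commit -s`; used here as explicit sign-off.
SIGNOFF_TRAILER = re.compile(r"^Signed-off-by:\s*\S", re.MULTILINE)


@dataclass
class AiUsageReport:
    total: int
    ai_assisted: int
    missing_signoff: list[str]  # subject lines of AI-assisted commits with no sign-off

    @property
    def ai_share(self) -> float:
        return self.ai_assisted / self.total if self.total else 0.0


def summarize(commit_messages: list[str]) -> AiUsageReport:
    """Count AI-tagged commits and flag those that lack a developer sign-off."""
    ai_assisted = 0
    missing: list[str] = []
    for message in commit_messages:
        if AI_TRAILER.search(message):
            ai_assisted += 1
            if not SIGNOFF_TRAILER.search(message):
                missing.append(message.splitlines()[0])
    return AiUsageReport(len(commit_messages), ai_assisted, missing)


commits = [
    "Add retry logic\n\nAI-Assisted: yes\nSigned-off-by: Dana <dana@example.com>",
    "Fix typo in README",
    "Generate CSV exporter\n\nAI-Assisted: yes",
]
report = summarize(commits)
print(f"{report.ai_share:.0%} AI-assisted; missing sign-off: {report.missing_signoff}")
# prints: 67% AI-assisted; missing sign-off: ['Generate CSV exporter']
```

In practice, the messages could be collected with `git log --format=%B%x00` and split on the NUL byte. Recording the share over time, per repository or per team, is what turns individual sign-offs into a measurable acceptance-rate trend.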

2. Implement AI-Specific Review Checklists

AI code requires different scrutiny than human code. A comprehensive code review checklist for AI-generated code should include:

| Review Category | What to Check | Why It Matters |
|---|---|---|
| Security Validation | Authentication, authorization, input validation, data exposure | 48% of AI-generated code has vulnerabilities |
| Edge Case Handling | Null checks, boundary conditions, error handling | AI often misses uncommon scenarios |
| Performance Impact | Algorithm efficiency, database queries, memory usage | AI optimizes for correctness, not performance |
| Code Maintainability | Naming, structure, comments, documentation | AI often generates verbose, unclear code |
| Test Coverage | Unit tests, integration tests, edge cases | AI-generated tests are often superficial |
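As a concrete instance of the Security Validation row, here is a minimal Python sketch of a pattern reviewers frequently need to catch: user input interpolated directly into SQL. The table, function names, and payload are hypothetical; the example uses only the standard-library `sqlite3` module.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list[tuple]:
    # Pattern to flag in review: user input formatted straight into the SQL text.
    query = f"SELECT id, name FROM users WHERE name = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list[tuple]:
    # Reviewed version: the "?" placeholder makes sqlite3 bind the value as data.
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (username,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2: the injected OR clause matches every row
print(len(find_user_safe(conn, payload)))    # 0: the payload is compared as a literal name
```

The fix is not to sanitize the string by hand but to let the database driver bind parameters, which is exactly the kind of change an input-validation checklist item should prompt.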

3. Mandate Human-in-the-Loop for Critical Paths

Not all code requires equal scrutiny. Implement tiered review based on risk:

- Critical (Security, Payment, Auth): Mandatory senior developer review, security scan, manual testing;

- High (User-Facing Features): Peer review, automated testing, QA validation;

- Medium (Internal Tools): Automated checks, spot-check review;

- Low (Documentation, Config): Automated validation only.
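One way to make these tiers enforceable is to derive them from the files a pull request touches. The sketch below assumes hypothetical directory names (`src/auth/`, `src/payments/`, `docs/`, and so on); each team would substitute its own layout. A change inherits the strictest tier of any file it modifies, and unmatched paths default to the cautious "high" tier.

```python
from fnmatch import fnmatchcase

# Hypothetical path patterns, checked from strictest tier to loosest.
TIER_RULES = [
    ("critical", ["src/auth/*", "src/payments/*", "*security*"]),
    ("high", ["src/web/*", "src/api/*"]),
    ("medium", ["tools/*", "scripts/*"]),
    ("low", ["docs/*", "*.md", "*.yaml", "*.yml"]),
]

# Review steps per tier, following the tiered list above.
REQUIRED_CHECKS = {
    "critical": ["senior developer review", "security scan", "manual testing"],
    "high": ["peer review", "automated testing", "QA validation"],
    "medium": ["automated checks", "spot-check review"],
    "low": ["automated validation"],
}

TIER_ORDER = ["low", "medium", "high", "critical"]


def tier_for_path(path: str) -> str:
    for tier, patterns in TIER_RULES:
        if any(fnmatchcase(path, pattern) for pattern in patterns):
            return tier
    return "high"  # unmatched paths default to a cautious tier


def tier_for_change(paths: list[str]) -> str:
    """A pull request takes the strictest tier among the files it touches."""
    return max((tier_for_path(p) for p in paths), key=TIER_ORDER.index, default="low")


changed = ["docs/setup.md", "src/payments/refund.py"]
tier = tier_for_change(changed)
print(tier, REQUIRED_CHECKS[tier])
```

Here the documentation edit rides along with the senior review that the payment change requires. Note that `fnmatchcase` lets `*` match `/`, so `src/auth/*` also covers nested directories.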

4. Use Automated Code Quality Gates

Modern code review automation catches issues humans miss:

- Static Analysis: Security vulnerabilities, code smells, complexity metrics;

- Dynamic Analysis: Runtime behavior, performance profiling, memory leaks;

- Test Coverage: Enforce minimum coverage thresholds before merge;

- Dependency Scanning: Vulnerable libraries, license compliance.
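For the coverage gate, coverage.py already provides `coverage report --fail-under=N`, which exits with a non-zero status when total coverage is below N. The sketch below expresses the same check as a small function, assuming the JSON report written by `coverage json`, so it can be combined with other gates in one script. The 80% threshold and the function names are illustrative choices, not recommendations from this article.

```python
import json

MIN_COVERAGE = 80.0  # illustrative threshold; each team sets its own


def coverage_gate(report: dict, minimum: float = MIN_COVERAGE) -> tuple[bool, str]:
    """Check a coverage.py JSON report (from `coverage json`) against a minimum."""
    percent = float(report["totals"]["percent_covered"])
    if percent >= minimum:
        return True, f"coverage {percent:.1f}% meets the {minimum:.1f}% minimum"
    return False, f"coverage {percent:.1f}% is below the {minimum:.1f}% minimum"


def run_gate(report_path: str, minimum: float = MIN_COVERAGE) -> int:
    """Return a process exit code: 0 lets the merge proceed, 1 blocks it."""
    with open(report_path, encoding="utf-8") as f:
        ok, message = coverage_gate(json.load(f), minimum)
    print(message)
    return 0 if ok else 1
```

A CI step could call `sys.exit(run_gate("coverage.json"))` after generating the report, so a failing gate blocks the merge the same way a failing test does.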

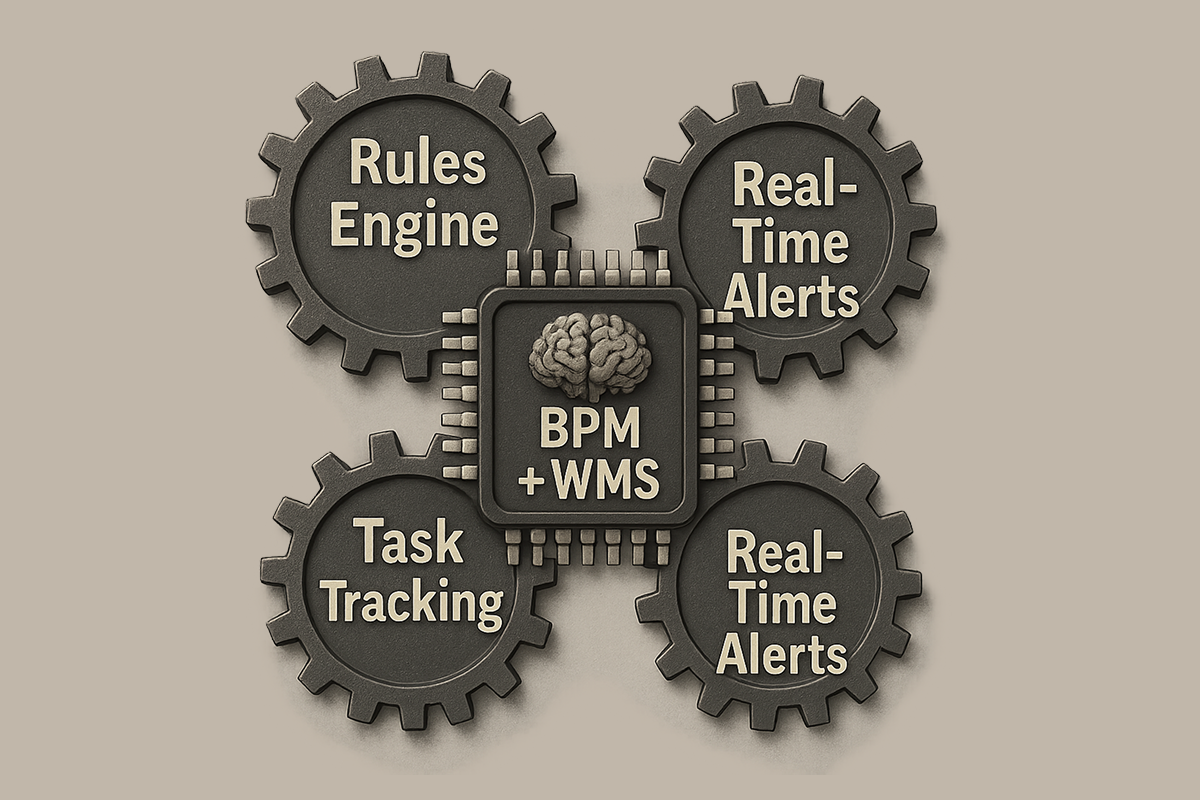

Azati's Proven Code Review Framework for AI-Augmented Development

- Pre-Commit Validation: Automated security scans and quality checks before code even enters review;

- AI-Aware Review Tools: Distinguish and flag AI-generated code for appropriate scrutiny;

- Risk-Based Review Tiers: Match review intensity to code criticality and business impact;

- Continuous Quality Monitoring: Track code quality metrics to identify degradation early;

- Developer Education: Train teams on AI code patterns and common failure modes;

- Governance Integration: Embed review requirements in development workflow, not as separate gates.
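The first item, pre-commit validation, can be approximated with a Git `pre-commit` hook. The sketch below scans only the added lines of the staged diff for a few secret-like patterns. The patterns, names, and structure are hypothetical illustrations, not Azati's implementation; dedicated secret scanners ship far larger, tuned rule sets.

```python
import re
import subprocess

# Illustrative patterns only; real secret scanners use much larger, tuned rule sets.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"AKIA[0-9A-Z]{16}"),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hard-coded password": re.compile(r"password\s*=\s*['\"][^'\"]+['\"]", re.IGNORECASE),
}


def staged_diff() -> str:
    """Return the staged changes that are about to be committed, without context lines."""
    result = subprocess.run(
        ["git", "diff", "--cached", "--unified=0"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def find_secrets(diff_text: str) -> list[str]:
    """Return the name of each pattern found on an added line of the diff."""
    findings = []
    for line in diff_text.splitlines():
        # Only added lines matter; "+++ b/file" is a header, not content.
        if not line.startswith("+") or line.startswith("+++ "):
            continue
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append(name)
    return findings
```

Installed as an executable `.git/hooks/pre-commit` script that exits with a non-zero status whenever `find_secrets(staged_diff())` returns findings, this would stop the commit. Because `git commit --no-verify` skips hooks, the same scan should also run in CI.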

The Real Cost of Skipping Code Review: A Case Study

Consider a mid-size SaaS company that embraced AI coding tools aggressively in early 2025:

6-Month Impact: Speed vs. Quality

Initial Results (Months 1-3):

- Feature velocity increased 55%;

- Pull request volume doubled;

- Team morale high, developers felt productive.

Reality Check (Months 4-6):

- Production incidents increased 180%;

- Customer-reported bugs up 220%;

- Engineering time spent on bug fixes increased from 20% to 65%;

- Two security vulnerabilities exploited, requiring emergency patches;

- Net feature delivery velocity decreased compared to the pre-AI baseline.
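A back-of-the-envelope calculation shows how the last result can follow from the others. Assume, purely for illustration, that the 55% raw throughput gain from months 1-3 persisted, and that feature work received whatever engineering time was not spent on bug fixes (80% before, 35% after). Relative to the pre-AI baseline, feature capacity would then be:

$$
\frac{\text{feature capacity (months 4-6)}}{\text{feature capacity (baseline)}}
= \frac{1.55 \times (1 - 0.65)}{1.00 \times (1 - 0.20)}
= \frac{0.5425}{0.80} \approx 0.68
$$

Under these simplified assumptions, the team delivers roughly a third less feature work than before adopting AI tools, despite writing code faster.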

What went wrong? The team had reduced peer code review to maintain velocity, accepting AI suggestions with minimal scrutiny. The technical debt accumulated invisibly until it manifested as an operational crisis.

The fix: Implementing Azati's AI-aware code review framework reduced incidents by 70% within 60 days while maintaining 40% higher velocity than the pre-AI baseline.

Software Quality Assurance in the Age of AI

Software quality assurance must evolve to address AI-generated code's unique characteristics. Traditional QA focuses on testing functionality. Modern QA for AI-augmented development adds layers:

AI-Specific Quality Dimensions

- Correctness: Does the code do what it's supposed to? (Traditional QA);

- Security: Does it introduce vulnerabilities? (AI-specific concern);

- Maintainability: Can humans understand and modify it? (AI often fails here);

- Performance: Is it efficient at scale? (AI optimizes for code that works, not code that is optimal);

- Reliability: Does it handle edge cases? (AI misses uncommon scenarios).
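The Performance dimension is easiest to see side by side. Both functions below return the same result, but the first is the kind of nested-loop solution that passes a quick functional test and then slows down sharply on large inputs. The pair is an illustrative Python sketch, not output captured from any particular assistant.

```python
from collections import Counter


def find_duplicates_quadratic(items: list[str]) -> list[str]:
    # Plausible first draft: compares each item with every later item, O(n^2) comparisons.
    duplicates = []
    for i, item in enumerate(items):
        for other in items[i + 1:]:
            if item == other and item not in duplicates:
                duplicates.append(item)
    return duplicates


def find_duplicates_linear(items: list[str]) -> list[str]:
    # Reviewed version: one counting pass, O(n) expected time.
    # Counter keeps first-seen order, so results match the version above.
    counts = Counter(items)
    return [item for item, count in counts.items() if count > 1]


sample = ["x", "y", "y", "z", "w", "x", "x"]
print(find_duplicates_quadratic(sample))  # ['x', 'y']
print(find_duplicates_linear(sample))     # ['x', 'y']
```

On a list of a few dozen items the difference is invisible, which is why visual or functional testing alone rarely catches it; at a hundred thousand items the quadratic version performs billions of comparisons.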

Technical Debt Management: The Compound Interest Problem

Technical debt management becomes exponentially more critical with AI-generated code. Here's why:

- AI debt compounds faster: Unmaintainable code makes future AI suggestions worse (assistants draw on existing code as context for new suggestions);

- Visibility problem: Debt isn't obvious until refactoring attempts fail;

- Knowledge loss: Nobody understands why AI-generated code works a certain way;

- Refactoring risk: Changing AI code without understanding original intent breaks functionality.
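The compound-interest metaphor can be made concrete with a deliberately simple model. Assume, for illustration only, that unaddressed debt increases the effort of later work by a fixed fraction r per month, applied to the debt already accumulated, and compare it with linear growth at the same rate:

$$
\begin{aligned}
D_t &= D_0\,(1 + r)^t && \text{(compounding)} \\
L_t &= D_0\,(1 + r\,t) && \text{(linear, for comparison)} \\
\frac{D_{12}}{D_0} &= 1.10^{12} \approx 3.14, \quad \frac{L_{12}}{D_0} = 1 + 0.10 \times 12 = 2.2 && (r = 0.10,\ t = 12)
\end{aligned}
$$

The 10% monthly rate is hypothetical; the point is the shape of the curve. Under compounding, the gap between the two widens every month, which is what "grows exponentially, not linearly" means in practice.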

| Debt Type | Cause | Compound Effect | Mitigation |
|---|---|---|---|
| Security Debt | Unreviewed AI code with vulnerabilities | Exploits multiply as more code builds on flawed patterns | Mandatory security review + automated scanning |
| Architecture Debt | AI suggests quick fixes vs. proper design | System becomes rigid, changes break unexpectedly | Senior review of architectural changes |
| Documentation Debt | AI generates code without context explanation | Future developers can't maintain or extend code | Require documentation for all AI-generated code |
| Test Debt | Superficial AI-generated tests | False confidence, bugs slip to production | Human-written tests for critical paths |
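The Documentation Debt mitigation is easier to enforce when a check runs automatically. The sketch below uses Python's standard `ast` module to list functions and classes that lack a docstring. Exempting names that start with an underscore is an assumed policy, and the function name is hypothetical.

```python
import ast


def undocumented_definitions(source: str) -> list[str]:
    """List public functions and classes in `source` that have no docstring."""
    missing = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            # Assumed policy: names starting with "_" are private and exempt.
            if not node.name.startswith("_") and ast.get_docstring(node) is None:
                missing.append(node.name)
    return missing


example = '''
def parse(row):
    """Split a CSV row into fields."""
    return row.split(",")

def total(values):
    return sum(values)

class Exporter:
    def _write(self, rows):
        pass
'''
print(undocumented_definitions(example))  # ['total', 'Exporter']
```

A docstring check is only a floor: it confirms that an explanation exists, not that it captures why the code works the way it does. That judgment still belongs to the human reviewer.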

How Azati Helps Organizations Build Quality at AI Speed

At Azati, we've spent 22 years building software systems that balance innovation velocity with engineering excellence. The AI coding revolution doesn't change the fundamentals; it amplifies them.

Our approach combines AI coding assistants with mature code review processes to capture productivity gains without accumulating destructive technical debt.

Azati's Quality Assurance for AI-Augmented Development

- 22 Years of Engineering Excellence: Proven frameworks that work at scale across 400+ projects;

- AI-Aware Code Review: Specialized processes for reviewing and validating AI-generated code;

- Automated Quality Gates: Security scanning, complexity analysis, coverage enforcement before merge;

- Senior Developer Oversight: Experienced engineers review architectural decisions and critical code paths;

- Continuous Quality Monitoring: Track code quality metrics to catch degradation early;

- Developer Training: Teach teams to use AI effectively while maintaining quality standards.

Real Results: Quality + Velocity

Organizations partnering with Azati for AI-augmented development see:

- Productivity gains sustained: 40-50% velocity improvement maintained long-term;

- Quality maintained or improved: Production incidents decrease despite faster shipping;

- Technical debt controlled: Code maintainability scores improve over time;

- Security vulnerabilities caught early: 95% of issues found before production;

- Developer satisfaction higher: Teams ship confidently without fear of production failures.

Conclusion: Speed Without Sacrifice

The hidden cost of vibe coding without code review isn't immediately visible. It manifests months later as security incidents, production failures, and maintenance crises. By then, the technical debt has compounded to catastrophic levels.

AI-generated code is powerful. Research shows that 91% of engineering organizations have adopted at least one AI coding tool, and the productivity gains are real. But productivity without quality is just expensive waste.

The solution isn't to abandon AI coding assistants; it's to evolve code review best practices to match the new reality. Organizations that implement AI-aware review processes, automated quality gates, and proper governance capture the velocity gains while avoiding the hidden costs.

At Azati, we help organizations navigate this balance, building at AI speed without breaking production. Because in 2026, the competitive advantage isn't just moving fast. It's moving fast sustainably.