Strong pilots, weak production transitions - are AI models to blame?

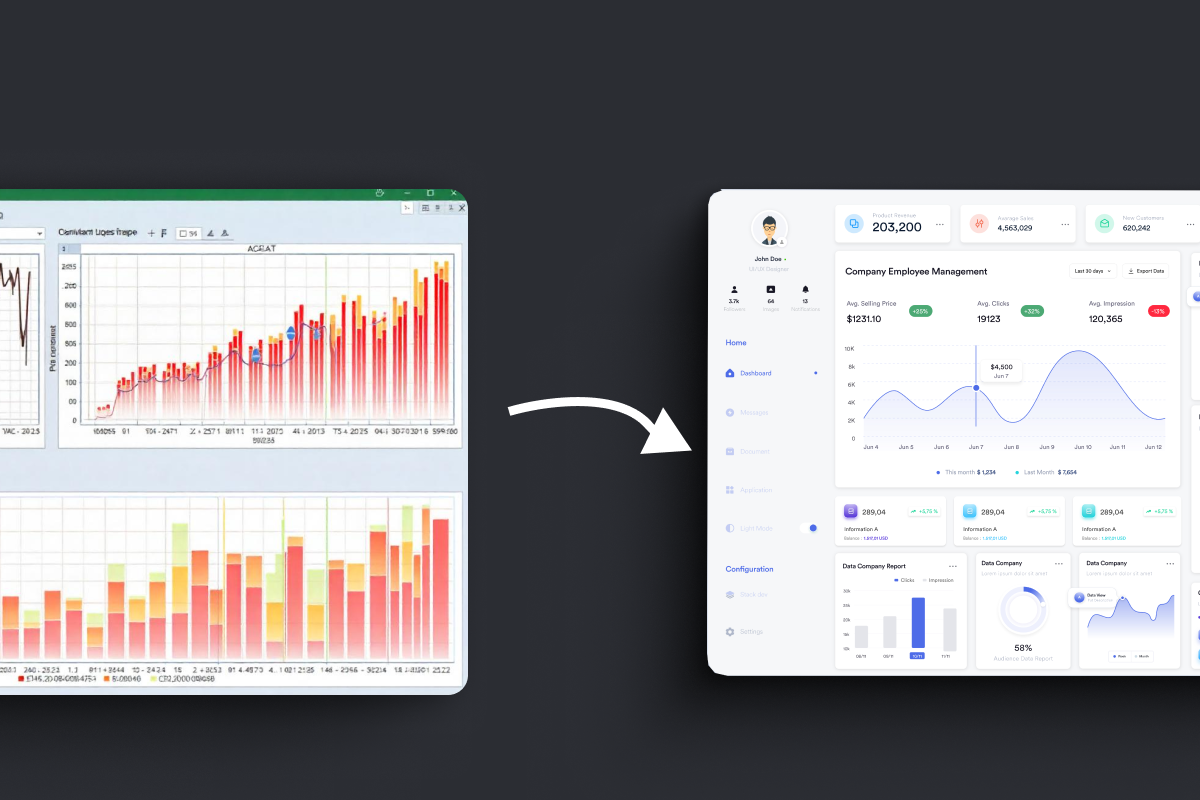

Six months after go-live, we reviewed a claims AI system we had built in one of our early enterprise deployments. The pilot had been flawless. Production was not: accuracy had fallen from 91% to 64%, and the cost per document had tripled. The compliance team had quietly added a parallel manual process "just in case."

Nobody had noticed. We learned a hard lesson that day: the models hadn't failed, but the environment around them had.

This is not a rare story.

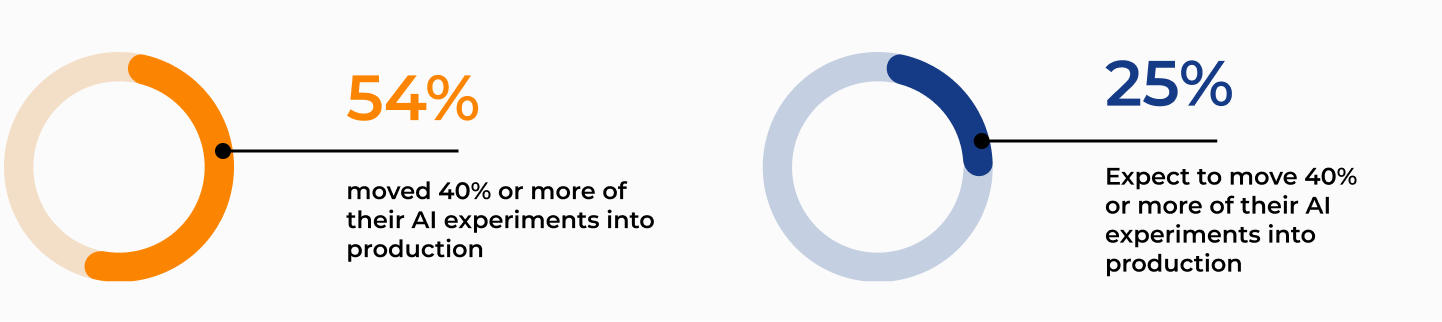

According to Deloitte's State of AI in the Enterprise, only 25% of companies have moved 40% or more of their AI experiments into production, yet over half expect to reach that level within months. That gap is not optimism. It's a recurring pattern I've watched play out across regulated financial and insurance environments: strong pilots, weak production transitions.

Here are five technical reasons it happens when GenAI moves from sandbox to scale.

1. The pilot data doesn't match production reality.

Pilots run on neatly parsed, single-issue PDFs prepared by someone who understands the use case. Production intake is a mess: 50-page forwarded email threads, low-resolution scans with handwritten notes, and unstructured EDI feeds. When you feed this into an LLM, the context window overflows, the RAG (Retrieval-Augmented Generation) pipeline grabs the wrong paragraphs, and the AI loses the plot. This isn't a model failure. It's a document parsing and data pipeline problem that teams ignore until go-live.
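One small piece of that pipeline is keeping each piece of text inside a fixed size budget before it reaches retrieval or the model. The sketch below is a minimal illustration, not a production parser: it assumes whitespace-separated words are an acceptable rough stand-in for tokens, and the function name `chunk_words` and its parameters are hypothetical.

```python
from typing import List


def chunk_words(text: str, max_words: int = 200, overlap: int = 20) -> List[str]:
    """Split text into overlapping chunks of at most max_words words.

    Assumption: word count is only a rough proxy for model tokens; a real
    pipeline would count tokens with the tokenizer of the model in use.
    """
    if max_words <= 0 or not 0 <= overlap < max_words:
        raise ValueError("need max_words > 0 and 0 <= overlap < max_words")

    words = text.split()
    step = max_words - overlap  # how far the window advances each time
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reached the end of the text
    return chunks
```

The overlap exists so that a sentence cut at a chunk boundary still appears whole in at least one chunk. It does nothing for scan quality or thread structure, which need their own handling upstream.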

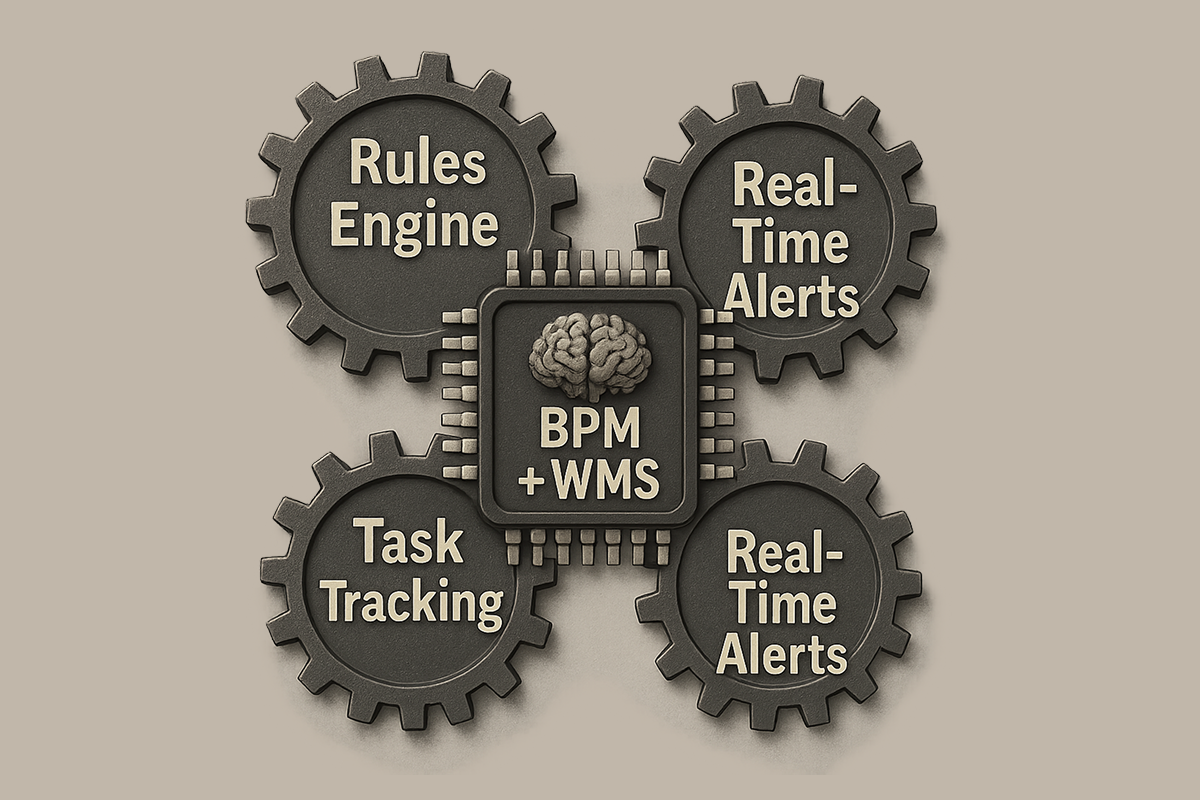

2. There's no orchestration layer for the AI.

A GenAI pilot typically operates in an isolated environment: a chat UI or a script returning results to a spreadsheet. A production system needs the AI to act: updating a live core claims platform, pulling history from a DMS, or logging to a compliance registry. None of these legacy systems were built for LLM "tool calling" or API-first integration. In one banking deployment we ran, connecting legacy platforms required RPA bridges because direct APIs simply didn't exist. Skipping orchestration design means spending months just on integration.
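To make the idea concrete, here is a minimal sketch of the dispatch step in an orchestration layer: the model proposes a tool call, and a registry decides whether and how it runs. Everything here is an assumption for illustration, including the `ToolRegistry` class, the `{"name": ..., "arguments": ...}` call shape, and whatever adapter sits behind each tool (a direct API, an RPA bridge, or something else).

```python
from typing import Any, Callable, Dict


class ToolRegistry:
    """Maps tool names requested by a model to vetted Python callables."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn

    def dispatch(self, call: Dict[str, Any]) -> Dict[str, Any]:
        """Run one tool call and always return a structured result.

        Assumed call shape: {"name": str, "arguments": dict}.
        """
        name = call.get("name")
        args = call.get("arguments", {})
        if name not in self._tools:
            # Never execute something the model invented.
            return {"ok": False, "error": f"unknown tool: {name}"}
        try:
            return {"ok": True, "result": self._tools[name](**args)}
        except TypeError as exc:
            # Typically missing or unexpected arguments from the model.
            # Note: this also catches TypeErrors raised inside the tool.
            return {"ok": False, "error": f"bad arguments: {exc}"}


# Hypothetical adapter; in practice this would wrap the legacy integration.
def update_claim_status(claim_id: str, status: str) -> str:
    return f"{claim_id} -> {status}"
```

The design choice worth noting is that the registry, not the model, is the only path to a live system, so every action can be validated and logged in one place.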

3. Missing guardrails for hallucinations.

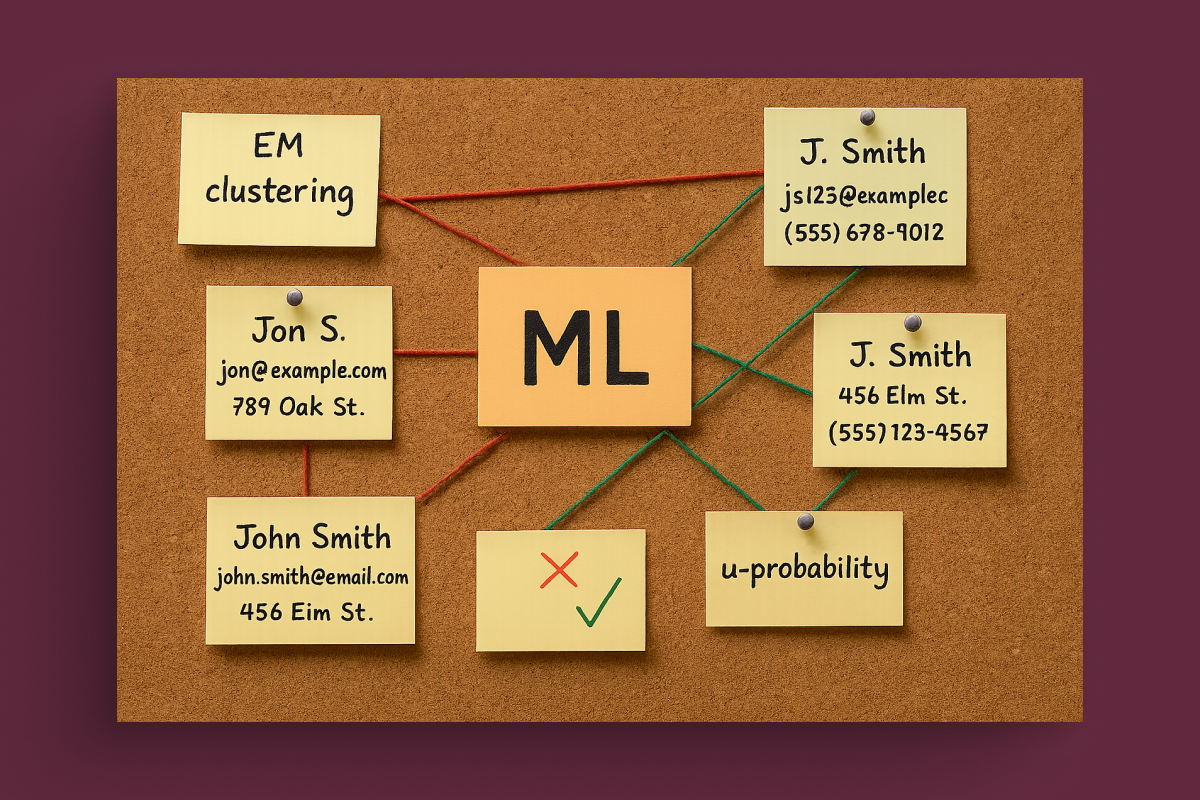

LLMs don't natively provide reliable confidence scores: they can hallucinate a policy exclusion with 100% certainty. If your workflow treats LLM output as absolute truth, you are building a compliance bomb. Production requires strict guardrails, automated verification steps (like cross-checking extracted entities against the source text), and a seamless human-in-the-loop routing layer for edge cases.
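The cross-check mentioned above can start very simply. The sketch below assumes extracted values should appear verbatim in the source (after normalizing case and whitespace) and routes anything that doesn't to a person. The function names and the `"auto"` / `"human_review"` labels are hypothetical, and a literal substring match will miss paraphrased or reformatted values, so treat it as a first filter rather than a complete guardrail.

```python
from typing import Dict, List, Tuple


def _normalize(text: str) -> str:
    # Lowercase and collapse all whitespace runs to single spaces.
    return " ".join(text.lower().split())


def verify_extraction(
    source_text: str, extracted: Dict[str, str]
) -> Tuple[List[str], List[str]]:
    """Return (verified, unverified) field names.

    A field counts as verified only if its value occurs in the source text.
    """
    haystack = _normalize(source_text)
    verified: List[str] = []
    unverified: List[str] = []
    for field, value in extracted.items():
        needle = _normalize(value)
        if needle and needle in haystack:
            verified.append(field)
        else:
            unverified.append(field)
    return verified, unverified


def route(source_text: str, extracted: Dict[str, str]) -> str:
    """Send any extraction with an ungrounded field to human review."""
    _, unverified = verify_extraction(source_text, extracted)
    return "human_review" if unverified else "auto"
```

For example, an extracted exclusion of "earthquake damage" against a policy that only mentions flood damage would be routed to `human_review` instead of flowing straight into a decision.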

4. LLM observability is treated as basic logging.

A pilot logs enough to see if the code ran. A production claims system in a regulated environment requires full LLM observability and execution tracing. You need an immutable, explorable record: what exact prompt was constructed, which specific document chunks the RAG system retrieved, the model's intermediate reasoning, and the raw output. If a compliance officer asks why an AI denied a claim and you only have standard server logs instead of full context traces, they will mandate a parallel manual process, defeating the automation entirely.
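As a rough sketch of what such a record could look like, the code below stores one trace per model call and chains the entries with SHA-256 hashes so that later edits are detectable. The `TraceRecord` fields and the helper names are assumptions for illustration. A hash chain makes a log tamper-evident, not immutable; real immutability would also need write-once storage and access controls.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class TraceRecord:
    """One model call: what went in, what was retrieved, what came out."""
    timestamp: str
    model_id: str
    prompt: str
    retrieved_chunk_ids: Tuple[str, ...]
    raw_output: str
    reasoning: str = ""  # intermediate reasoning, if the system exposes it

    def to_json(self) -> str:
        # sort_keys gives a stable serialization, so hashes are repeatable.
        return json.dumps(asdict(self), sort_keys=True)


def append_trace(log: List[Dict[str, str]], record: TraceRecord) -> str:
    """Append a record whose digest covers the previous entry's digest."""
    prev = log[-1]["digest"] if log else ""
    payload = record.to_json()
    digest = hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()
    log.append({"payload": payload, "prev": prev, "digest": digest})
    return digest


def verify_log(log: List[Dict[str, str]]) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev = ""
    for entry in log:
        expected = hashlib.sha256(
            (prev + entry["payload"]).encode("utf-8")
        ).hexdigest()
        if entry["prev"] != prev or entry["digest"] != expected:
            return False
        prev = entry["digest"]
    return True
```

With records like these, the question "why was this claim denied?" can be answered from the exact prompt and retrieved chunks rather than reconstructed from server logs.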

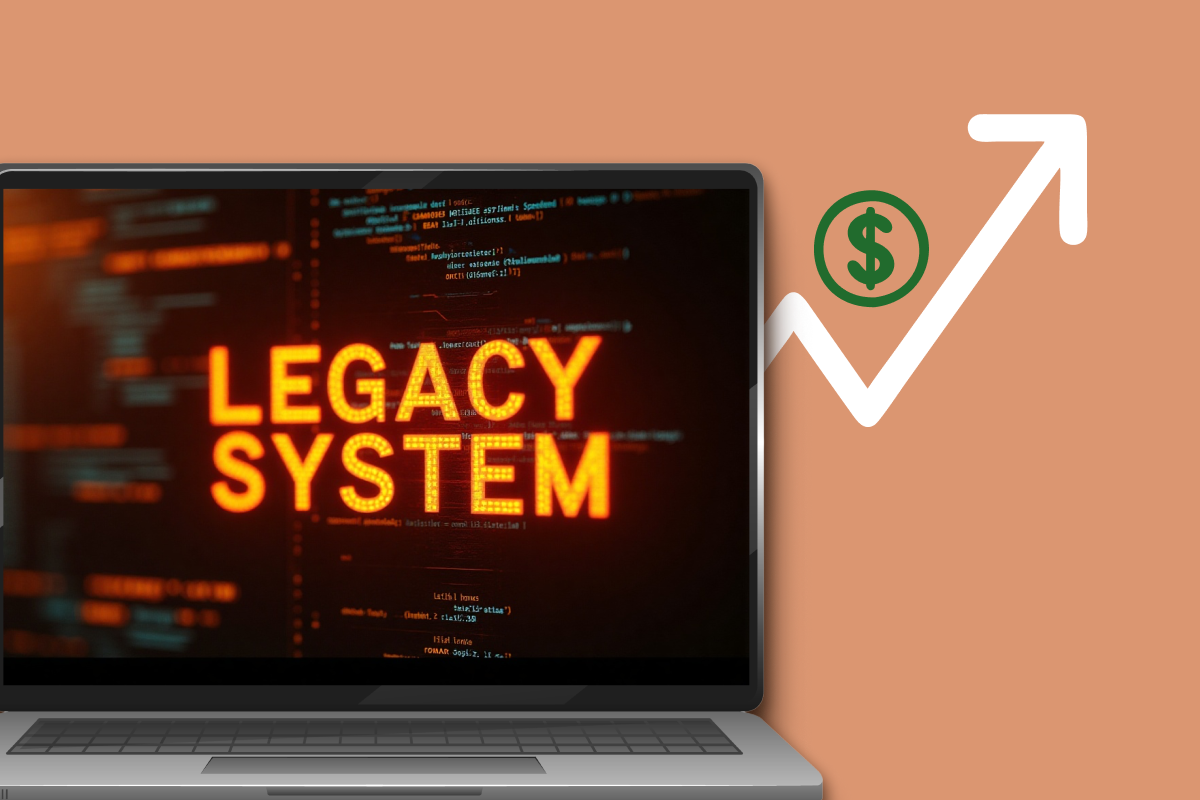

5. Nobody owns system degradation and Evals.

GenAI systems degrade over time. API providers quietly update their models, causing "prompt drift", and your vector databases get polluted with outdated policy guidelines. To maintain production stability, continuous evaluation (Evals) must become your system's immune system. Running automated tests against "golden datasets" catches these regressions before they impact live claims. Without Evals and dedicated LLMOps ownership, accuracy drops silently. The AI doesn't break; the context around it does.
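A golden-dataset check can be as small as the sketch below. It assumes exact-match scoring and a golden set shaped as a list of `{"input": ..., "expected": ...}` cases; the function name `run_evals` and the `min_accuracy` threshold are hypothetical, and real Evals for free-text output usually need softer scoring than equality.

```python
from typing import Any, Callable, Dict, List


def run_evals(
    predict: Callable[[str], str],
    golden: List[Dict[str, str]],
    min_accuracy: float = 0.9,
) -> Dict[str, Any]:
    """Score a predictor against a golden dataset using exact match.

    predict stands in for the full pipeline (retrieval plus model call).
    """
    if not golden:
        raise ValueError("golden dataset is empty")

    # Keep the failing cases so the owner can see what regressed.
    failures = [
        case for case in golden if predict(case["input"]) != case["expected"]
    ]
    accuracy = (len(golden) - len(failures)) / len(golden)
    return {
        "accuracy": accuracy,
        "passed": accuracy >= min_accuracy,
        "failures": failures,
    }
```

Run on a schedule and after every prompt, model, or index change, a failing result becomes a signal someone owns, instead of a drop that goes unnoticed for months.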

The honest observation after multiple production deployments: most of these failures are not AI failures. The foundation models work. What fails is the engineering discipline around them.

To survive in production, an AI pilot needs to evolve into an industrial pipeline: robust document ingestion, seamless API orchestration, strict hallucination guardrails, full execution observability, and continuous Evals.

A claims AI system is closer to operational infrastructure than to a software project, and it needs to be treated that way from day one.

I am open to discussing AI in regulated operations, industrial AI, and the gap between what AI promises and what it actually delivers in production. Fill out the form below to start a conversation.