The conversation about AI in insurance claims has two distinct phases. The first phase is the business case – and that conversation is largely over. Faster processing, lower cost per claim, higher straight-through rates, better SLA compliance. Every operations leader at a mid-to-large insurer has seen the numbers.

The second phase is harder: getting from that business case to a system that actually runs in production, processes real documents from real partners, and survives compliance review at an institution supervised by De Nederlandsche Bank (DNB). That conversation is where most projects stall, not because the technology fails, but because the deployment wasn't designed for the operational and regulatory environment it has to run in.

This article covers both sides: what the operational transformation actually looks like when claims AI is working correctly, and what compliance teams at regulated European insurers need to see before they'll approve go-live.

What the current state actually costs

Operations leaders know the direct costs of claims backlogs – SLA breach penalties, overtime, customer churn. These numbers are calculable and they're the ones that drive the AI business case.

The less-discussed cost is the cost of fixing it incorrectly: deploying AI that compliance won't approve, or that works for three months and then quietly degrades because nobody owns it operationally.

A typical manual claims processing workflow at a European insurer looks like this: a document arrives – via email, portal upload, or still occasionally fax-to-PDF – and a case officer manually opens it, identifies the document type, extracts the relevant fields, cross-references data across two or three separate systems, and routes it to the appropriate queue. If anything is missing or inconsistent, it goes to an exception queue. Exception queues are where SLA commitments go to die.

Average end-to-end time on standard cases: two to three business days. Exception handling adds more. Manual review cost per document scales linearly with volume – when claims volume grows 20–30%, the options are to hire more people or let SLAs slip. Usually both happen. The audit trail is whatever the case officer remembered to log.

This is the baseline. It's expensive, it doesn't scale, and it creates audit exposure that becomes harder to manage as regulatory requirements increase.

What changes with a properly deployed AI layer

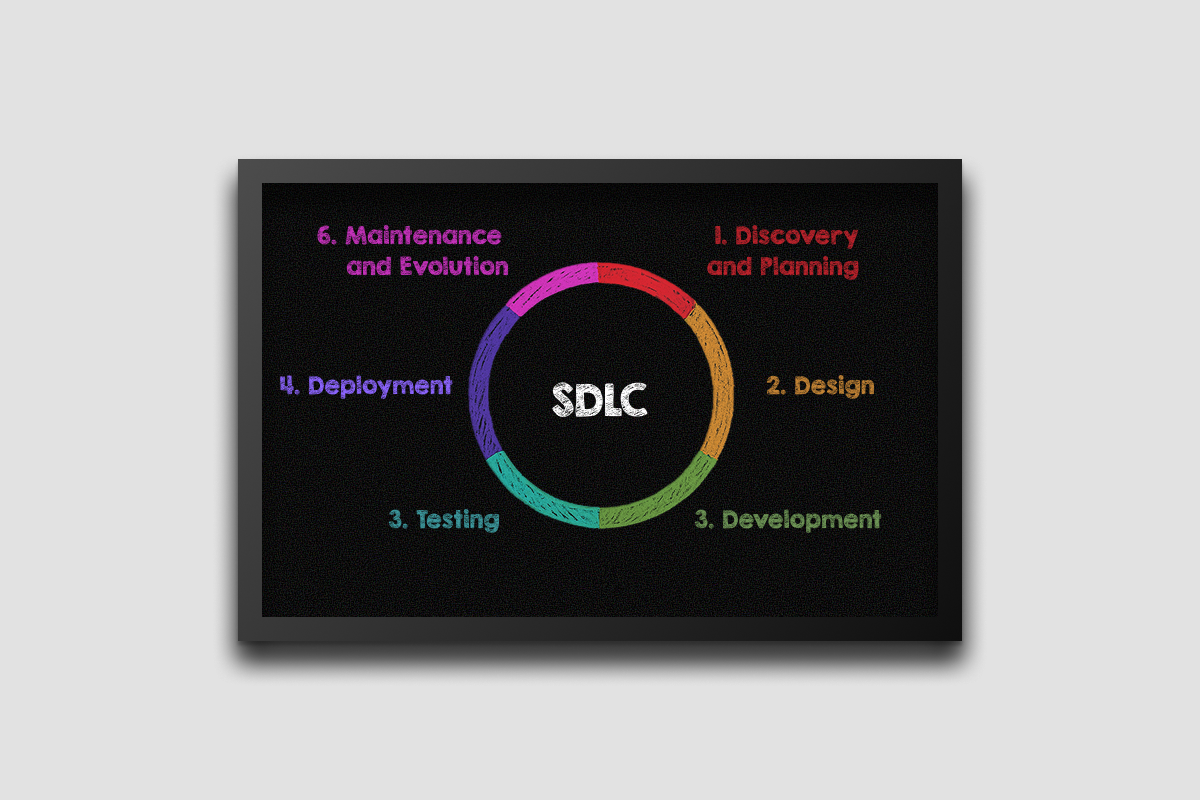

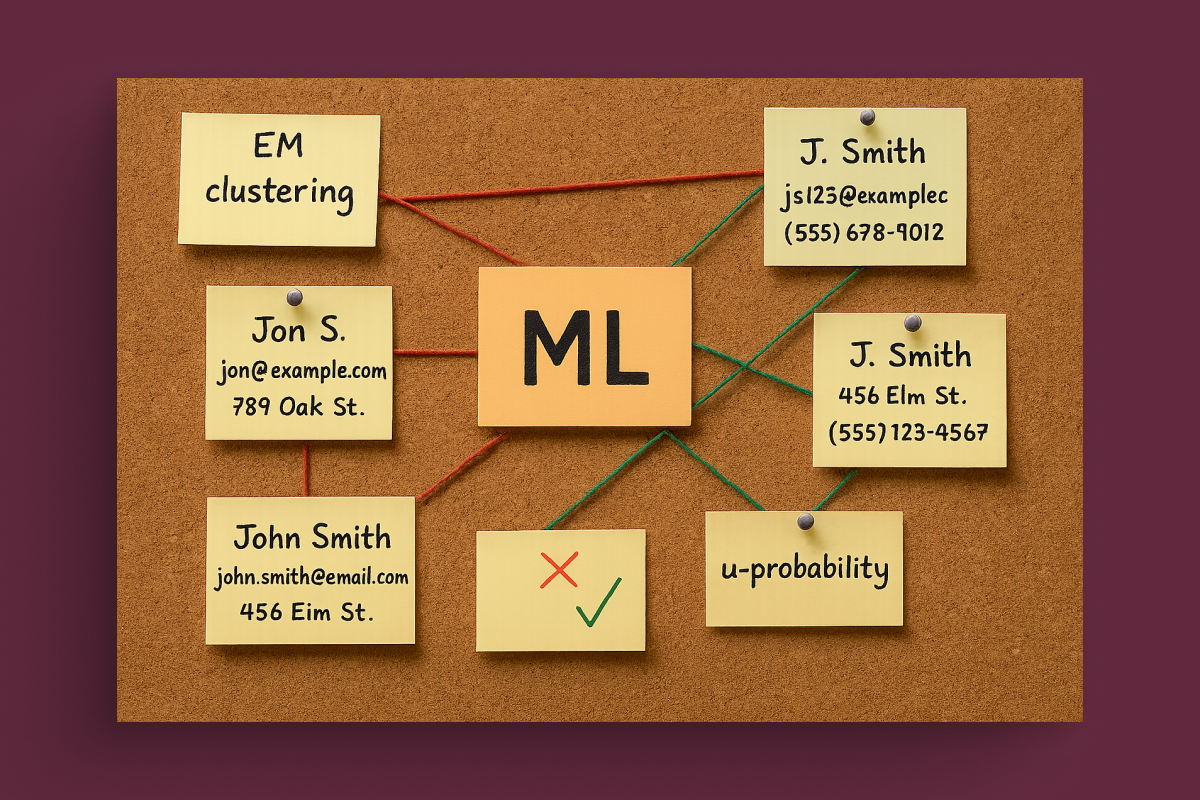

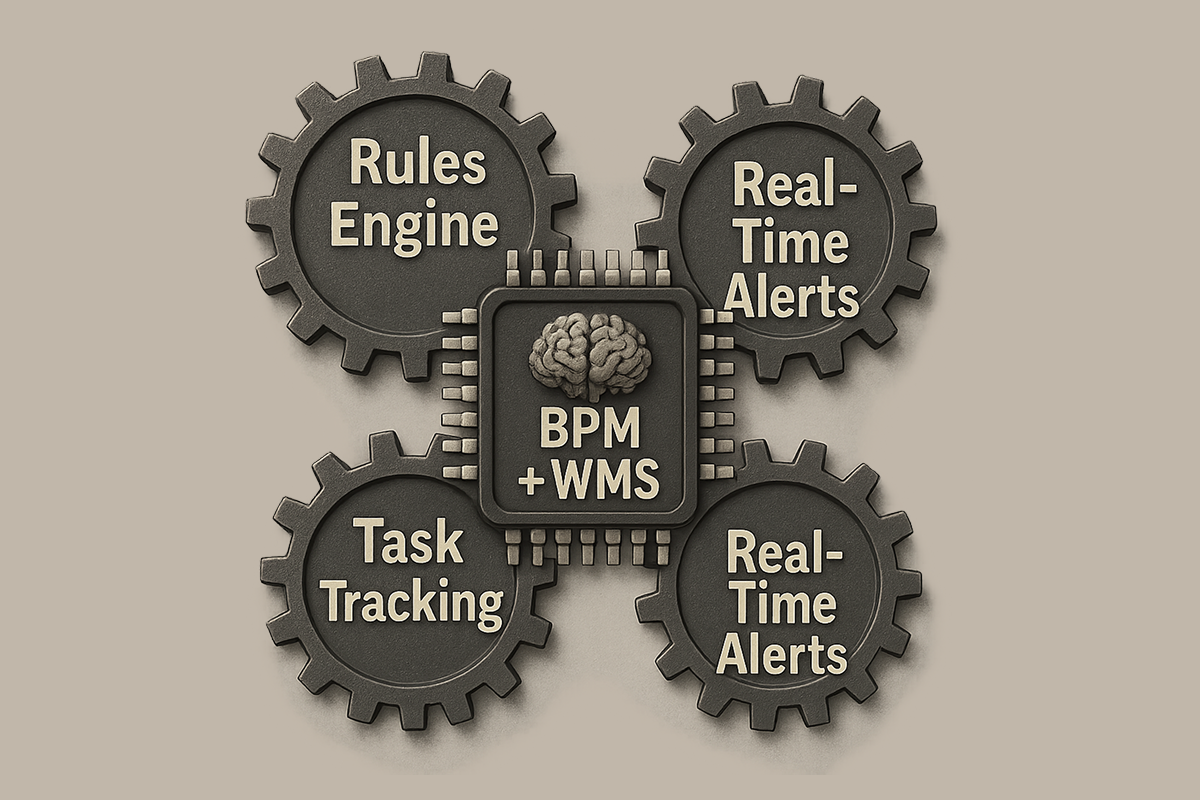

The operational transformation is best understood through the routing logic that sits at the centre of a production claims AI system.

A document arrives via any intake channel. An ingestion pipeline normalises the format (PDF, TIFF, DOCX, EDI) regardless of source. The AI layer classifies the document type, extracts structured fields, validates them against master data, and generates a routing decision based on a confidence score.

- High confidence – straight-through processing. The claim moves forward automatically. No human involvement. A full audit log is generated at every processing step.

- Medium confidence – human review queue, pre-populated. The reviewer sees a structured summary: extracted fields, flagged discrepancies, confidence scores per field, and an AI-generated assessment. They are making a judgment call on an edge case – not doing data entry on a standard document. The difference in reviewer productivity is significant.

- Low confidence or exception trigger – escalation with full context assembled. The escalation handler receives everything needed to make a decision immediately, rather than starting from a raw document stack.
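The three-zone routing above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the production logic. The following are all hypothetical choices made for the example: the `Extraction` fields, the 0.90 and 0.60 thresholds, routing on the weakest field, and treating a missing mandatory field as the exception trigger.

```python
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    STRAIGHT_THROUGH = "straight_through"  # high confidence: no human involvement
    HUMAN_REVIEW = "human_review"          # medium confidence: pre-populated review queue
    ESCALATION = "escalation"              # low confidence or exception trigger


@dataclass
class Extraction:
    doc_type: str
    fields: dict[str, str]
    field_confidence: dict[str, float]     # per-field confidence from the extraction model
    discrepancies: list[str] = field(default_factory=list)     # master-data mismatches
    missing_required: list[str] = field(default_factory=list)  # mandatory fields not found


# Hypothetical thresholds; in production these would be configuration, not constants.
HIGH_THRESHOLD = 0.90
LOW_THRESHOLD = 0.60


def route(ex: Extraction) -> Route:
    """Route on the weakest field, so one uncertain value is enough to involve a human."""
    if ex.missing_required:
        return Route.ESCALATION  # assumed exception trigger
    weakest = min(ex.field_confidence.values(), default=0.0)
    if weakest < LOW_THRESHOLD:
        return Route.ESCALATION
    if weakest < HIGH_THRESHOLD or ex.discrepancies:
        return Route.HUMAN_REVIEW  # reviewer sees fields, scores and flagged discrepancies
    return Route.STRAIGHT_THROUGH
```

Routing on the weakest field rather than an average is a deliberately conservative choice. A single uncertain policy number should not be hidden by nine confident fields.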

From a deployment we operate for an insurance group processing over 40,000 documents per month: 85% of documents are processed without any human involvement. End-to-end processing time dropped from two to three business days to under 90 seconds. Cost per processed document fell by 52% within six months of go-live. Volume grew 30% during this period, and headcount did not. Zero changes were made to the existing SAP or DMS infrastructure.

These outcomes are not exceptional. They are the expected outcome of a correctly designed and operated system. The reason they're not universal is that most deployments fail before reaching this operational state – for reasons that have nothing to do with the AI itself.

The operational requirements that most pilots miss

Three operational design decisions determine whether a claims AI deployment reaches these outcomes or stalls at pilot stage. We have covered them before from a more technical perspective. Here the focus is on what they mean operationally.

Intake normalisation before AI processing

Production claims environments receive documents from dozens of external partners in formats that no training dataset fully captures. A production ingestion layer that normalises all input before it reaches the classification and extraction layer is not optional – it's what separates a system that works on test data from one that works on Monday morning's actual intake. Partners change their document layouts. New document types arrive from newly onboarded clients. The normalisation layer absorbs this variability so the AI layer doesn't have to.
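Format conversion (PDF, TIFF, DOCX, EDI to text) is only part of the normalisation layer. The other part is mapping each partner's labels and value conventions onto one canonical schema before the results are validated. The sketch below shows that second part. The following are all hypothetical: the partner IDs, the alias table, the accepted date formats and the amount-parsing rule. Unrecognised labels are kept aside for review rather than silently dropped.

```python
from dataclasses import dataclass
from datetime import date, datetime
from decimal import Decimal

# Hypothetical per-partner label aliases mapped onto one canonical schema.
CANONICAL_ALIASES: dict[str, dict[str, str]] = {
    "partner_a": {"Polisnummer": "policy_number", "Schadedatum": "loss_date", "Bedrag": "amount"},
    "partner_b": {"PolicyNo": "policy_number", "DateOfLoss": "loss_date", "Total": "amount"},
}

# Accepted date formats; anything else is rejected rather than guessed.
DATE_FORMATS = ("%d-%m-%Y", "%Y-%m-%d", "%d/%m/%Y")


@dataclass
class NormalisedDocument:
    partner_id: str
    fields: dict[str, object]
    unmapped: dict[str, str]  # labels the layer did not recognise, kept for review


def parse_date(raw: str) -> date:
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date()
        except ValueError:
            continue
    raise ValueError(f"unrecognised date: {raw!r}")


def parse_amount(raw: str) -> Decimal:
    # Assumption: a comma after the last dot is a decimal comma ("1.234,56"),
    # otherwise commas are thousands separators ("1,234.56").
    s = raw.replace("€", "").replace("EUR", "").strip()
    if "," in s and s.rfind(",") > s.rfind("."):
        s = s.replace(".", "").replace(",", ".")
    else:
        s = s.replace(",", "")
    return Decimal(s)


def normalise(partner_id: str, raw_fields: dict[str, str]) -> NormalisedDocument:
    aliases = CANONICAL_ALIASES.get(partner_id, {})
    fields: dict[str, object] = {}
    unmapped: dict[str, str] = {}
    for label, value in raw_fields.items():
        key = aliases.get(label)
        if key is None:
            unmapped[label] = value
        elif key == "loss_date":
            fields[key] = parse_date(value)
        elif key == "amount":
            fields[key] = parse_amount(value)
        else:
            fields[key] = value.strip()
    return NormalisedDocument(partner_id, fields, unmapped)
```

When a partner changes a layout, the fix is a new alias entry rather than a model change. That is the sense in which this layer absorbs variability so the AI layer doesn't have to.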

Confidence-tiered routing that is enforced by architecture, not policy

The three-zone routing described above needs to be a system-level constraint, not a workflow guideline. Routing thresholds need to be configurable by product line, claim type, and risk appetite – so that the business can adjust automation boundaries without engineering changes. In an underwriting decision assistant we built for a large insurer, configurable thresholds across green, yellow, and orange decision zones allowed the business to tune the automation boundary as confidence in the system grew. Straight-through processing increased 45%, and application processing capacity grew 2.5 times.
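One way to make thresholds configurable without engineering changes is to keep them in data the business owns and resolve the most specific match at runtime. The sketch below makes several assumptions. Thresholds live in a JSON document keyed by product line and claim type. The green, yellow and orange zones map to straight-through, review and escalation. The key names and threshold values are illustrative only.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    straight_through: float  # at or above: green (straight-through)
    review: float            # at or above, below straight_through: yellow (human review)
    # below review: orange (escalation)

    def __post_init__(self) -> None:
        if not 0.0 <= self.review <= self.straight_through <= 1.0:
            raise ValueError(f"invalid thresholds: {self}")


# Hypothetical configuration the business could edit without a code change.
CONFIG_JSON = """
{
  "default": {"straight_through": 0.95, "review": 0.70},
  "motor": {
    "default": {"straight_through": 0.90, "review": 0.65},
    "windscreen": {"straight_through": 0.85, "review": 0.60}
  },
  "health": {"default": {"straight_through": 0.98, "review": 0.80}}
}
"""


def load_thresholds(raw: str) -> dict:
    return json.loads(raw)


def thresholds_for(config: dict, product_line: str, claim_type: str) -> Thresholds:
    """Most specific match wins: line + claim type, then line default, then global default."""
    line_cfg = config.get(product_line, {})
    entry = line_cfg.get(claim_type) or line_cfg.get("default") or config["default"]
    return Thresholds(**entry)


def zone(score: float, t: Thresholds) -> str:
    if score >= t.straight_through:
        return "green"
    if score >= t.review:
        return "yellow"
    return "orange"
```

Validating thresholds when they are loaded means a misconfigured entry fails at deployment rather than silently widening the automation boundary.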

MLOps ownership from day one

A claims AI system that nobody monitors is a system that degrades. Models drift as document formats change, as partner layouts update, as regulatory requirements evolve. Cost per document increases as API call patterns shift without active optimisation. Accuracy decreases silently until case officers start flagging problems. Operational ownership – drift monitoring, retraining cycles, cost tracking, monthly performance review – needs to be scoped and assigned before go-live, not treated as a post-launch consideration.
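Drift monitoring can start with something as simple as comparing this month's distribution of confidence scores against the distribution at go-live. The sketch below uses the population stability index (PSI), one common choice among several. It applies the frequently quoted 0.10 and 0.25 cut-offs, which are a rule of thumb rather than a standard. The bucket count and smoothing constant are also assumptions.

```python
import math
from collections.abc import Sequence


def histogram(scores: Sequence[float], bins: int = 10) -> list[float]:
    """Share of confidence scores in each of `bins` equal-width buckets on [0, 1]."""
    if not scores:
        raise ValueError("need at least one score")
    counts = [0] * bins
    for s in scores:
        idx = min(max(int(s * bins), 0), bins - 1)  # clamp; a score of 1.0 goes in the top bucket
        counts[idx] += 1
    return [c / len(scores) for c in counts]


def psi(baseline: Sequence[float], current: Sequence[float],
        bins: int = 10, eps: float = 1e-4) -> float:
    """Population stability index between two samples of confidence scores."""
    total = 0.0
    for e, a in zip(histogram(baseline, bins), histogram(current, bins)):
        e, a = max(e, eps), max(a, eps)  # smooth empty buckets to avoid log(0)
        total += (a - e) * math.log(a / e)
    return total


def drift_status(value: float) -> str:
    # Rule-of-thumb cut-offs, not a regulatory standard.
    if value < 0.10:
        return "stable"
    if value < 0.25:
        return "investigate"
    return "drifted"
```

In practice the same check would run alongside the straight-through rate and cost per document. A shift in any of them then triggers the monthly review before case officers start flagging problems.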

What compliance teams actually need to approve it

This is where the majority of claims AI deployments stall – not in operations, but in legal and compliance review. The compliance gate is not an obstacle to route around. It is a legitimate set of requirements that, if designed for from the start, becomes straightforward to satisfy.

In regulated insurance environments across the EU, four things determine whether compliance approves or blocks a claims AI deployment.

An immutable audit trail per document – structured and retrievable by claim ID

Every automated action needs a logged record: data consumed, model version, confidence score, routing decision, reviewer identity and timestamp where applicable. This needs to be structured and exportable for regulatory review – not a database table that requires a developer to query, but a per-document record that compliance can retrieve by claim ID during an audit. The difference between a compliant audit architecture and an insufficient one is not the volume of data logged – it's the structure and accessibility of that data.

In two insurance deployments we operate, the audit layer was built as core infrastructure from day one. Both have subsequently passed regulatory reviews with zero findings related to the AI processing layer.
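A per-document audit record with the fields listed above can be sketched as an append-only log keyed by claim ID. In this hypothetical sketch, each event stores the hash of the previous event for the same claim, so editing any earlier entry makes verification fail. An in-memory dict stands in for what would be write-once storage in production. The class and field names are illustrative, not a specific product's schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    claim_id: str
    document_id: str
    step: str                    # e.g. "extraction", "routing", "human_review"
    decision: str
    model_version: Optional[str]
    inputs_ref: str              # pointer to the data consumed, not a copy of it
    confidence: Optional[float]
    reviewer_id: Optional[str]
    timestamp: str
    prev_hash: str               # hash of the previous event for the same claim
    event_hash: str = ""


def _digest(event: AuditEvent) -> str:
    body = asdict(event)
    body.pop("event_hash")  # the hash covers every field except itself
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log per claim; editing a past event breaks verify()."""

    def __init__(self) -> None:
        self._events: dict[str, list[AuditEvent]] = {}

    def append(self, claim_id: str, document_id: str, step: str, decision: str, *,
               model_version: Optional[str] = None, inputs_ref: str = "",
               confidence: Optional[float] = None,
               reviewer_id: Optional[str] = None) -> AuditEvent:
        chain = self._events.setdefault(claim_id, [])
        draft = AuditEvent(
            claim_id, document_id, step, decision, model_version, inputs_ref, confidence,
            reviewer_id, datetime.now(timezone.utc).isoformat(),
            prev_hash=chain[-1].event_hash if chain else "genesis",
        )
        event = AuditEvent(**{**asdict(draft), "event_hash": _digest(draft)})
        chain.append(event)
        return event

    def export(self, claim_id: str) -> str:
        """JSON export of one claim's trail, retrievable by claim ID without a developer."""
        return json.dumps([asdict(e) for e in self._events.get(claim_id, [])], indent=2)

    def verify(self, claim_id: str) -> bool:
        prev = "genesis"
        for e in self._events.get(claim_id, []):
            if e.prev_hash != prev or e.event_hash != _digest(e):
                return False
            prev = e.event_hash
        return True
```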

GDPR-compliant data handling with explicit answers on residency and retention

For claims documents containing health data (a special category of personal data under GDPR Article 9) alongside financial data and personal identifiers, the question of where data is processed is not administrative. Dutch insurers operating under DNB supervision are increasingly finding that a data processing agreement with a US-based SaaS vendor is insufficient. The DPA itself is valid; the problem is that compliance and legal teams no longer want to carry the residual risk of processing sensitive personal data outside EU jurisdiction. EU-hosted or on-premises deployment closes this question at the architecture level.
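Residency and retention become enforceable when they are checked in code at the point of dispatch and deletion, rather than stated in a policy document. The sketch below assumes a region allowlist and a per-category retention table. The region names, categories and retention periods are placeholders; the real values would come from the insurer's legal and compliance team.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical allowlist: processing endpoints must sit in EU regions or on premises.
ALLOWED_REGIONS = frozenset({"eu-west", "eu-central", "on-prem-nl"})

# Hypothetical retention periods per document category, in days after claim closure.
RETENTION_DAYS = {"medical_report": 7 * 365, "invoice": 7 * 365, "correspondence": 2 * 365}


class ResidencyViolation(Exception):
    pass


@dataclass(frozen=True)
class ProcessingTarget:
    name: str
    region: str


def assert_residency(target: ProcessingTarget) -> None:
    """Refuse to dispatch a document to an endpoint outside the allowlist."""
    if target.region not in ALLOWED_REGIONS:
        raise ResidencyViolation(f"{target.name} runs in {target.region}, outside the allowlist")


def deletion_due(category: str, closed_on: date, today: date) -> bool:
    """True once the retention period for this category has elapsed since claim closure."""
    if category not in RETENTION_DAYS:
        # No rule means no explicit answer: fail loudly rather than keep data indefinitely.
        raise ValueError(f"no retention rule for {category!r}")
    return today >= closed_on + timedelta(days=RETENTION_DAYS[category])
```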

Defined human approval points enforced by system architecture, not workflow policy

Compliance teams need to be able to demonstrate to regulators that AI is not making autonomous decisions on regulated outcomes. This requires showing clearly – in system design documentation, not just process maps – which decisions the AI can make, which require human sign-off, and how that sign-off is recorded and linked to the audit trail. Routing thresholds need to be visible and configurable. Override rates need to be logged and reviewable. This is not a documentation exercise – it requires architectural decisions that are difficult to retrofit after deployment.
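Enforcing approval points in architecture means the code path that finalises a decision cannot complete a regulated outcome without a recorded sign-off. The sketch below assumes a hypothetical set of regulated outcomes, with a `finalise` function as the single exit point. It also computes the override rate that compliance needs to review. In a full system, each sign-off would also be written to the audit trail.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical regulated outcomes: the AI may recommend these but never finalise them.
REGULATED_OUTCOMES = frozenset({"reject_claim", "reduce_payout", "refer_to_fraud"})


@dataclass
class SignOff:
    reviewer_id: str
    approved_outcome: str
    reason: str
    signed_at: str


@dataclass
class Decision:
    claim_id: str
    ai_recommendation: str
    sign_off: Optional[SignOff] = None

    @property
    def overridden(self) -> bool:
        return self.sign_off is not None and self.sign_off.approved_outcome != self.ai_recommendation


class ApprovalRequired(Exception):
    pass


def record_sign_off(decision: Decision, reviewer_id: str, outcome: str, reason: str) -> None:
    decision.sign_off = SignOff(reviewer_id, outcome, reason, datetime.now(timezone.utc).isoformat())


def finalise(decision: Decision) -> str:
    """Single exit point: a regulated outcome cannot complete without human sign-off."""
    if decision.sign_off is not None:
        return decision.sign_off.approved_outcome
    if decision.ai_recommendation in REGULATED_OUTCOMES:
        raise ApprovalRequired(f"{decision.claim_id}: {decision.ai_recommendation!r} needs sign-off")
    return decision.ai_recommendation


def override_rate(decisions: list[Decision]) -> float:
    """Share of human-reviewed decisions where the reviewer changed the AI recommendation."""
    reviewed = [d for d in decisions if d.sign_off is not None]
    return sum(d.overridden for d in reviewed) / len(reviewed) if reviewed else 0.0
```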

Explainability on demand for any individual decision

When a compliance officer or external regulator asks why a specific claim was routed to exception, or why a particular field was flagged, the system needs to answer in terms a non-technical reviewer can evaluate. Confidence scores from a black-box model are not sufficient. The explanation layer needs to show which input features drove the routing decision and why, in language that a compliance officer can include in a regulatory response.
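One simple way to produce explanations a compliance officer can quote is to attach a plain-language reason code to every validation check and to every field below its confidence threshold, then assemble them per claim. This reason-code approach is one option among several; model-agnostic attribution methods are another. The `Check` type and the message wording below are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    field: str
    passed: bool
    detail: str  # plain-language description written for a non-technical reader


def explain(claim_id: str, route: str, field_confidence: dict[str, float],
            checks: list[Check], review_threshold: float) -> str:
    """Build a plain-language explanation of what drove the routing decision."""
    reasons = [f"- {c.field}: {c.detail}" for c in checks if not c.passed]
    # Lowest-confidence fields first, since they weigh most on the routing.
    low = sorted((conf, name) for name, conf in field_confidence.items() if conf < review_threshold)
    reasons += [
        f"- {name}: the system was only {conf:.0%} confident in the extracted value "
        f"(threshold {review_threshold:.0%})"
        for conf, name in low
    ]
    if not reasons:
        reasons = ["- all checks passed and every field met the confidence threshold"]
    return f"Claim {claim_id} was routed to {route} because:\n" + "\n".join(reasons)
```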

The pattern among insurers who move fastest through compliance review is consistent: they treated compliance requirements as architectural constraints from the start of the project, not as a gate at the end. The time and cost of satisfying these requirements when designed in from the beginning is a fraction of what it costs to retrofit a deployed system.

The EU regulatory timeline that makes this urgent

Two regulatory frameworks, one approaching and one already in force, make the architectural decisions above more pressing than they might appear on a standard project timeline.

The EU AI Act's high-risk provisions take effect in August 2026, fourteen months away. Annex III of the Act lists AI systems used for risk assessment and pricing of natural persons in life and health insurance as high-risk, which covers much of underwriting decision support. Claims automation in those lines is likely to be treated the same way. High-risk classification requires conformity assessments, technical documentation, human oversight mechanisms, and ongoing accuracy monitoring. Insurers who deploy claims AI now without building this infrastructure will face a mandatory retrofit under regulatory pressure in a compressed timeframe. Building it in from the start is significantly less expensive than building it under deadline.

DORA, the Digital Operational Resilience Act, has applied since January 2025 and covers insurance and reinsurance undertakings as financial entities. When a cloud AI vendor's service supports a critical or important function such as claims processing, DORA's strictest ICT third-party requirements apply. These include concentration risk assessments, mandatory contractual provisions, audit and access rights, and documented exit strategies, which most SaaS vendors are not structured to accommodate. On-premises deployment, or dedicated EU infrastructure under the insurer's control, substantially reduces this third-party ICT dependency.

The practical starting point

The gap between a claims AI pilot and a production system is not primarily a technology gap. The technology is available, the approaches are proven, and the outcomes are predictable. The gap is in engineering discipline, operational design, and compliance architecture – and it closes fastest when these are treated as first-order requirements from the beginning of the project, not as constraints to address after the model is built.

For insurers evaluating claims AI deployment, the most useful early investment is an honest assessment of the current environment: document volumes and intake channels, integration points with core systems, compliance requirements as understood by the legal and compliance team, and internal capacity for ongoing AI operations. The answers to these questions determine the correct architecture – and they surface the real constraints before any code is written.