Everyone in software has an opinion on manual testing right now. Half the room says it's dead. The other half says AI tools are overhyped. Both are wrong in interesting ways.

After 20+ years of building and delivering custom software for BFSI, Life Sciences, Oil & Gas, and other complex, regulated, data-intensive, and legacy-heavy industries, we've watched QA, and manual testing in particular, survive several "death announcements" brought on by new tools, frameworks, and now AI. And we're still writing test cases by hand sometimes. Here's why that's not a contradiction.

The "Manual Testing Is Dead" argument has a fatal flaw

The argument usually goes: AI can generate test cases, LLMs can read specs and produce coverage, tools like Playwright with AI copilots can write e2e tests faster than any human. Why pay for manual QA?

The flaw is that this argument assumes clean, well-documented, greenfield systems – where specs exist, codebases are consistent, and behavior is predictable.

Most production software isn't that.

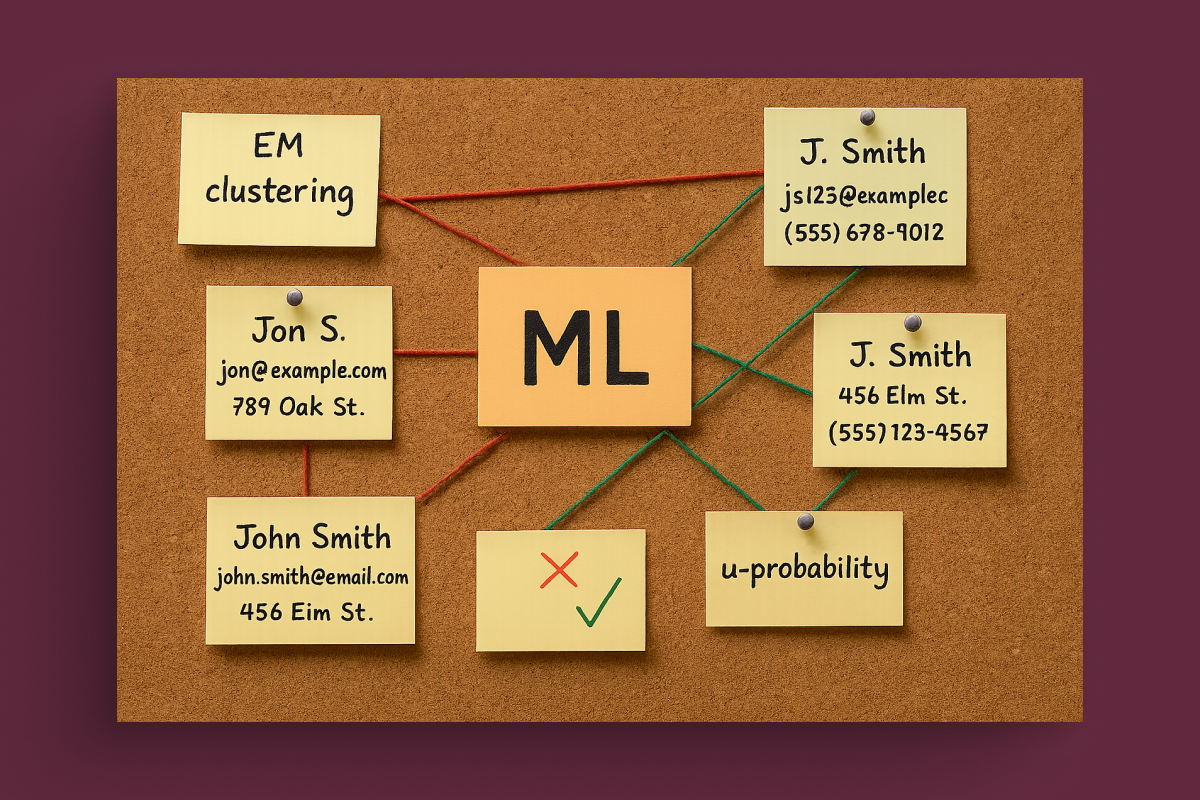

In our work with legacy financial systems and document management platforms with 15-year-old codebases, the most critical test cases are the ones no spec ever described: the edge cases that exist because of undocumented business logic, regulatory workarounds, and years of accumulated tribal knowledge. No LLM generates those test cases. A QA engineer who has spent three months with the system does.

Manual testing isn't dead. Its scope has changed.

What AI tools for QA are actually good at

Let's be direct about where the tooling genuinely helps:

Test generation from code and specs. Tools like GitHub Copilot, Testim, and increasingly purpose-built agents can generate unit and integration test scaffolding fast. If your system is well-structured and your requirements are documented, this is a genuine 3-5x productivity multiplier.

Visual regression testing. AI-assisted tools (Percy, Applitools) detect UI drift in ways that pixel-by-pixel comparison never could. For front-end-heavy products with frequent deploys, this is transformative.

Test maintenance. Flaky tests are QA's silent productivity killer. AI-assisted maintenance that detects selector drift or reclassifies false positives is real, unglamorous value.
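To make "flaky" concrete, here is a minimal sketch in Python of one common heuristic: a test is suspect if it both passed and failed on the same commit. The run history format, the `find_flaky_tests` name, and the sample data are assumptions made for illustration; commercial tools use richer signals than this.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Return names of tests that both passed and failed on the same commit.

    `runs` is an iterable of (test_name, commit_sha, passed) tuples.
    This record shape is hypothetical, not any specific tool's export format.
    """
    outcomes = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)
    # Two distinct outcomes for one (test, commit) pair means the code did not
    # change between runs, so the difference comes from the test or environment.
    return sorted({name for (name, _), seen in outcomes.items() if len(seen) == 2})


# Illustrative history, invented for this example.
history = [
    ("test_login", "abc123", True),
    ("test_login", "abc123", False),      # same commit, different result
    ("test_transfer", "abc123", False),
    ("test_transfer", "abc123", False),   # consistently failing, not flaky
    ("test_report", "abc123", True),
    ("test_report", "def456", False),     # differs across commits only
]

flaky = find_flaky_tests(history)
print(flaky)  # ['test_login']
```

Note that `test_report` is deliberately not flagged: a result that changes between commits may be a real regression, which is exactly the kind of call that still needs a human.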

Exploratory pattern recognition. Some tools can analyze production logs and suggest untested paths. For high-traffic systems, this kind of coverage gap analysis used to take weeks manually.
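At its simplest, this kind of coverage gap analysis is a comparison between what production traffic actually exercises and what the test suite covers. The sketch below assumes a hypothetical access log format of `METHOD PATH STATUS` and a hand-maintained set of tested routes; the `untested_paths` name and the sample data are illustrative, not taken from any particular tool.

```python
from collections import Counter


def untested_paths(log_lines, tested_routes):
    """Return (route, hit_count) pairs seen in production but absent from tests.

    Each log line is assumed to look like "METHOD PATH STATUS" (hypothetical).
    `tested_routes` is a set of (method, path) tuples covered by the suite.
    Results are ordered by traffic volume, busiest first.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) >= 2:  # skip blank or malformed lines
            hits[(parts[0], parts[1])] += 1
    return [(route, count) for route, count in hits.most_common()
            if route not in tested_routes]


# Illustrative data, invented for this example.
logs = [
    "GET /accounts 200",
    "POST /transfers 201",
    "POST /transfers 201",
    "DELETE /mandates 204",
    "POST /transfers/reverse 200",
    "DELETE /mandates 204",
    "POST /transfers 500",
]
tested = {("GET", "/accounts"), ("POST", "/transfers")}

gaps = untested_paths(logs, tested)
for (method, path), count in gaps:
    print(f"{method} {path}: {count} production hits, no test")
```

The output is only a list of candidates. Deciding which of those untested paths carries business or compliance risk remains a judgment call.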

Where AI QA tools still struggle: regulated environments with strict audit trail requirements, complex stateful workflows (multi-step financial transactions, document approval chains), and systems where the "correct" behavior is legally defined rather than technically defined. In these contexts, a model that confidently generates test cases can create false confidence – tests that pass but miss compliance-critical scenarios.

TaaS: real model or buzzword?

Testing as a Service has been around in various forms for over a decade – what's new is the economic model it's being pitched with now.

The promise: pay per test execution, elastic capacity, no fixed QA headcount, faster release cycles.

The reality is more nuanced.

TaaS works well when:

- Your product is stable enough that test suites don't need constant rethinking

- Quality requirements are standardized enough to transfer to an external team

- You're optimizing for throughput (running more tests faster), not coverage depth

TaaS underdelivers when:

- Domain knowledge is a prerequisite for knowing what to test – not just how

- Regulated industries require QA engineers who understand compliance requirements, not just test scripts

- You're dealing with a new product where requirements are still being discovered in production

The pattern we see repeatedly: companies adopt TaaS for regression testing (good fit), then try to extend it to full QA ownership (poor fit), and end up with a production incident caused by something a domain-aware tester would have flagged.

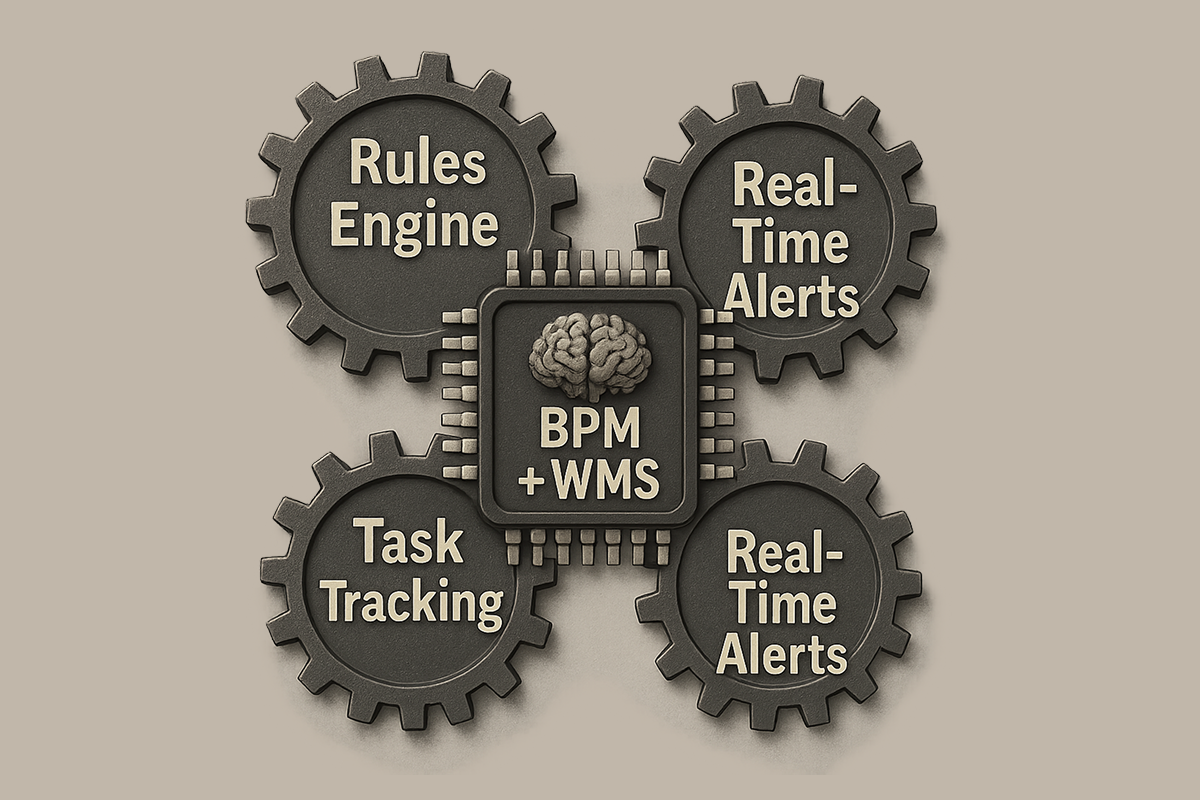

The question no one asks: who owns quality after go-live?

This is where most QA conversations stop too early.

The testing debate focuses almost entirely on the pre-release phase: automated vs. manual, AI-generated vs. handwritten, cheap vs. thorough. But production quality is an ongoing responsibility, not a pre-deployment checkbox.

In industries like insurance, banking, or oil & gas, a bug that makes it to production doesn't just cost reputation – it can mean regulatory penalties, operational shutdowns, or financial reconciliation nightmares. The question isn't just "how do we test before release?" It's "who monitors system behavior in production, who investigates anomalies, and who owns the feedback loop back into the test suite?"

This is why we've consistently found that the teams who get the most value from QA investment – whether it is manual, automated, or AI-assisted testing – are the ones who treat testing as an ongoing engineering responsibility rather than a gate at the end of a sprint.

What this means in practice for custom development teams

If you're working with a custom software development partner, here are the questions worth asking:

1. Can your QA team explain why a test case exists, not just that it exists? AI-generated test suites can hit coverage metrics without covering what matters. Domain-aware QA can explain the business risk behind each scenario.

2. Who handles production anomalies that don't match existing test cases? This gap between "all tests pass" and "the system behaves correctly in production" is where most post-launch quality failures live.

3. How does your QA process adapt to regulatory changes? In fintech and the public sector, requirements aren't frozen. A QA approach that doesn't include ongoing compliance review is a ticking clock.

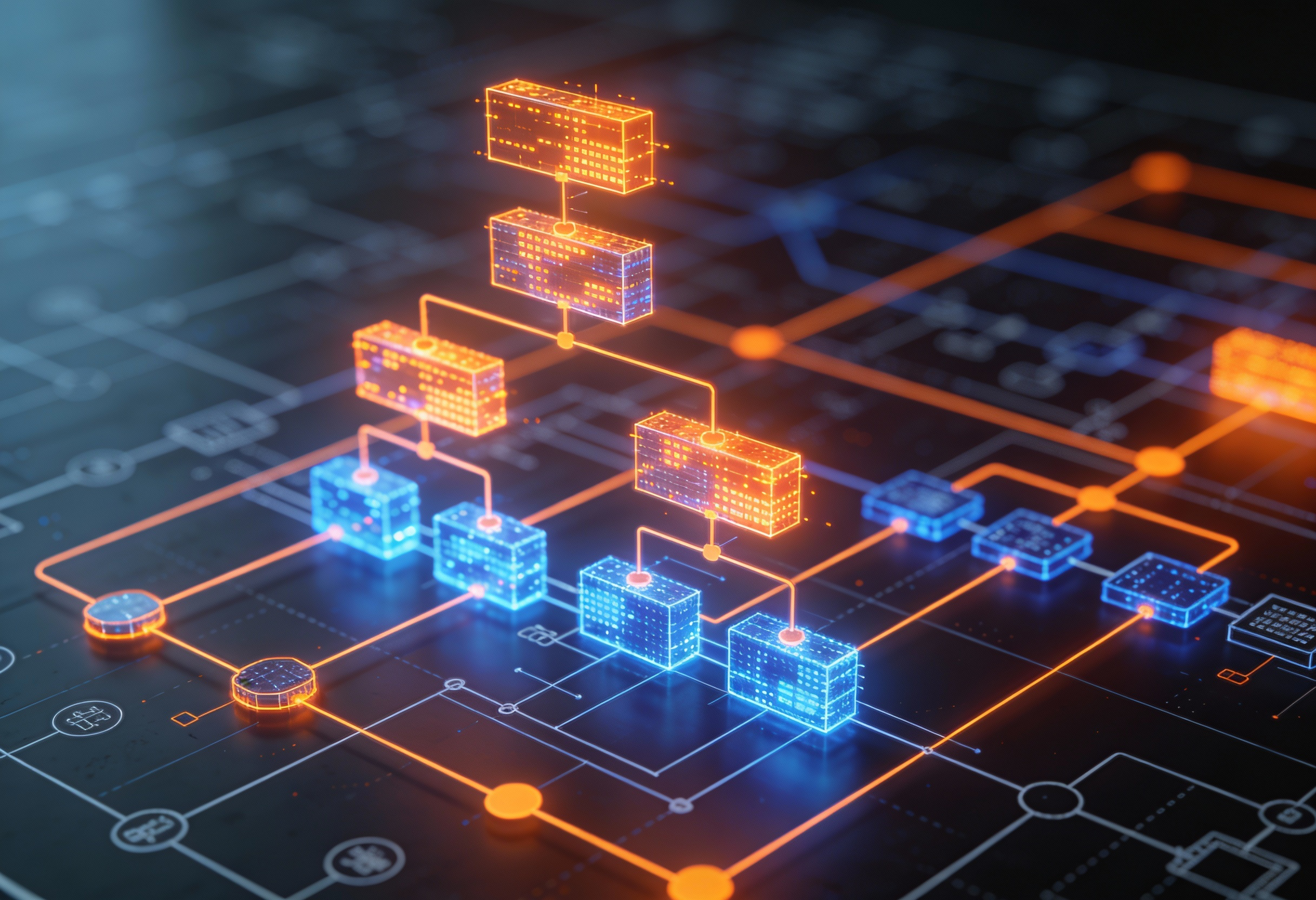

4. Are you testing the integration, not just the components? AI tools are particularly good at component-level testing. System-level, cross-domain integration testing (especially in data-intensive environments) still requires deliberate engineering judgment.

To sum up

Manual testing isn't dead – it's specializing. The routine, repetitive, high-volume regression work should absolutely be automated and increasingly AI-assisted. But the high-judgment work, such as understanding what failure looks like in a specific business context, testing compliance behavior, and investigating production anomalies in complex legacy systems, is becoming more valuable, not less.

TaaS makes sense as a delivery model for specific, bounded QA tasks. It doesn't replace embedded QA expertise in teams working on complex, regulated, or evolving systems.

And AI testing tools are real productivity multipliers with real limitations. The teams that benefit most are the ones who apply them to the right problems and stay skeptical about what they're actually covering.

Quality isn't a phase. It's an ownership stance.

Azati has been building and maintaining production software in data-heavy industries such as BFSI and EOG for over 20 years. If you're dealing with QA challenges in legacy systems, regulated environments, or post-AI-generated codebases, we're happy to compare notes.