I've had a version of the same conversation probably thirty times in the last year.

It starts with someone telling me they want to use AI for engineering documentation. Search, retrieval, maybe extraction from drawings. Big volumes. Years of backlog. The usual picture.

We talk for a while. I ask questions. And somewhere around the forty-minute mark, sometimes earlier, the conversation quietly changes direction. Not because anyone decides to change the subject. More like the real question finally comes to the surface.

"Can your system tell us whether this drawing has been stamped with the right revision before someone uses it on site?"

"We need to know when a procedure references a document that's been superseded. Automatically. Before the engineer finds out the hard way."

"Everything has to be traceable. Our regulator doesn't accept 'the AI said so.'"

At that point I understand we've stopped talking about document management. We've started talking about something else entirely.

The Gap Nobody Warned Them About

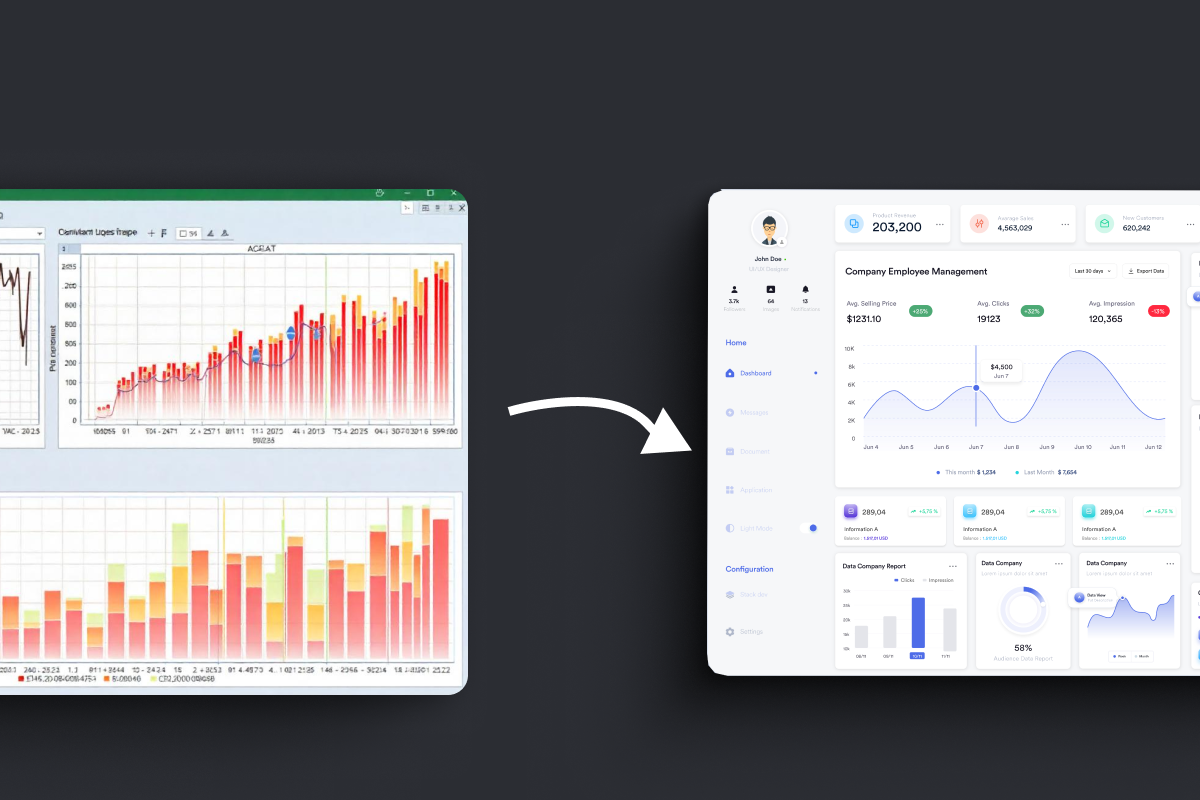

Here's the thing about document AI as it's mostly sold today: it solves the problem of finding things.

And that is a real problem. Engineering teams in large infrastructure projects can spend up to 20% of their working hours just locating, cross-checking, and reconciling information scattered across PDFs, contractor submissions, and legacy drawing sets. Getting that time back matters.

But finding the document is step one. What most operators actually need is steps two through five.

Is this the current revision? Does it match what's in the asset register? Has anyone flagged a change to the referenced specification since this drawing was approved? Who still needs to sign off, and can I show an auditor exactly where that approval is documented?

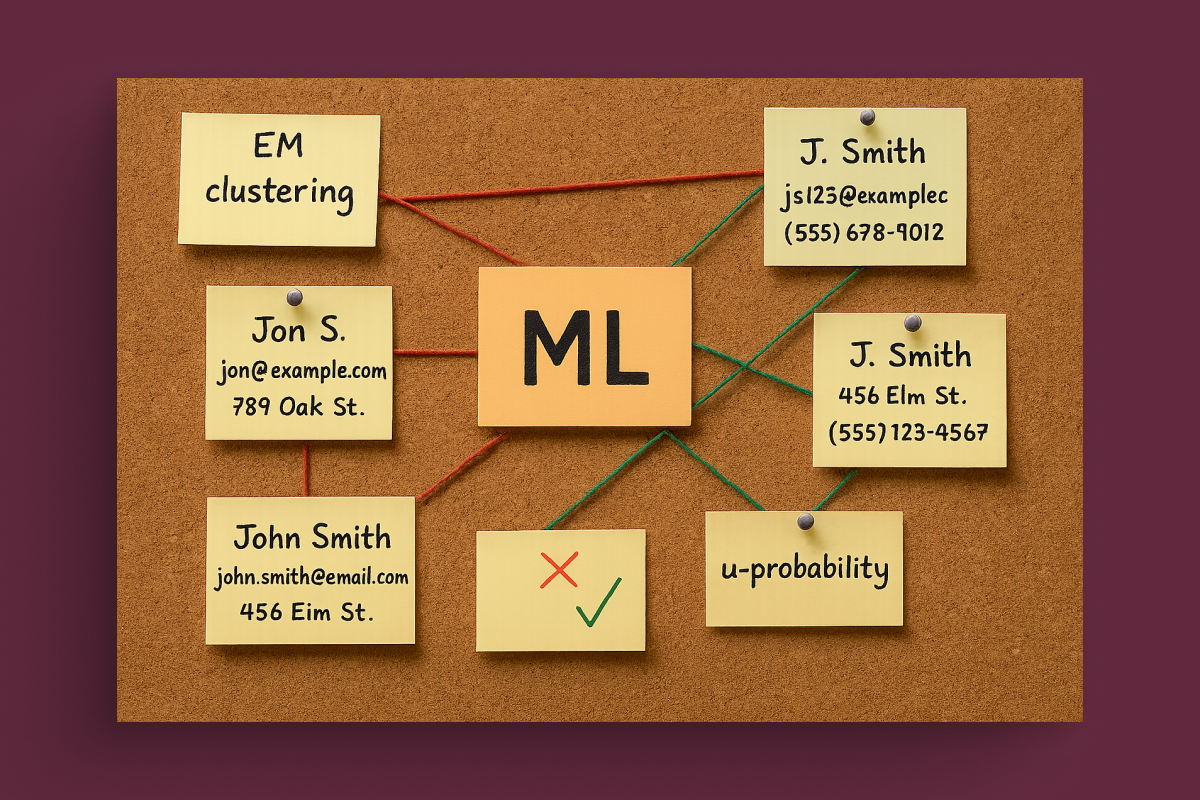

These are not search problems. I am not a technical person; I don't design software systems. But I've talked to enough engineers and project managers to understand that these questions require something fundamentally different from an index. They require the system to understand that documents are connected to other documents, that revisions have histories, and that some outputs need to be locked to a source before a human can act on them.

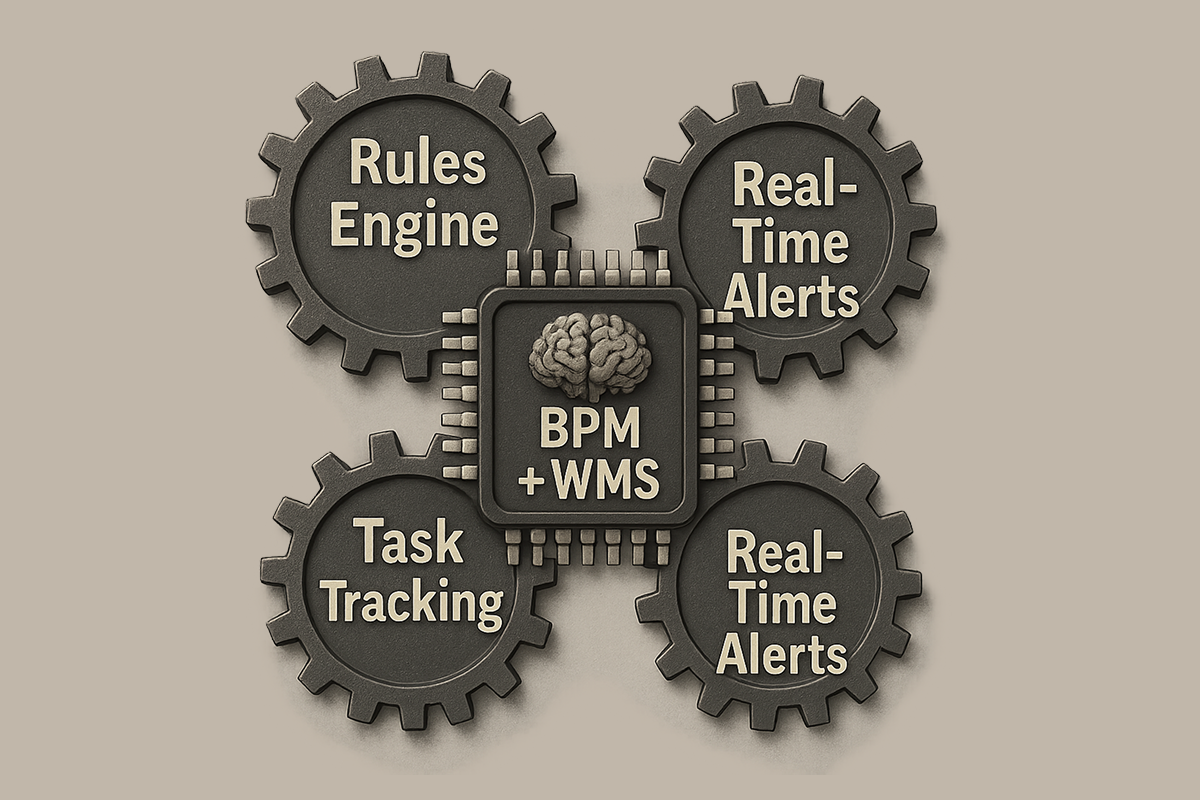

Most document AI tools don't do that, not because they're poorly built, but because they were built to solve a different problem. Revision control, cross-document consistency, and rule-based validation require a different class of tool. Call it engineering intelligence if you want a label, but the practical meaning is simple: AI that understands how engineering documentation works, not just what's written inside it.

What I Kept Hearing Across Industries

Let me describe a few conversations from the last twelve months without naming the organizations. The point is the pattern.

Specialty Chemicals: P&ID Validation at Scale

A specialty chemicals manufacturer in Asia works with P&IDs, the piping and instrumentation diagrams that define how production systems are connected. Every product change triggers P&ID updates.

They told me that validating a single updated drawing takes between eight and sixteen man-days. Manually. Checking tags, verifying that flow directions are still correct after a change, confirming that safety logic hasn't been accidentally broken somewhere upstream. Fifty to a hundred of these updates happen every six months.

What they wanted from AI wasn't search. They wanted automated diff-checking between revisions, rule-based validation that asks: does this drawing still comply with HAZOP requirements? Is the PSV logic consistent? Did anyone accidentally remove a safety element during the last revision?
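As a rough illustration only, here is a minimal Python sketch of what revision diff-checking with one rule-based check could look like. It assumes each drawing revision has already been extracted into a list of tagged elements, which is the hard part in practice. The `Element` fields, the `SAFETY_KINDS` rule, and the tag names are all hypothetical, not taken from the manufacturer's system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Element:
    tag: str       # hypothetical tag, e.g. "PSV-101"
    kind: str      # hypothetical element type, e.g. "pump", "psv"
    flows_to: str  # tag of the downstream element, "" if none


# Assumed rule: these element kinds count as safety elements.
SAFETY_KINDS = {"psv", "interlock"}


def diff_revisions(old, new):
    """Compare two revisions of one drawing, keyed by element tag."""
    old_by_tag = {e.tag: e for e in old}
    new_by_tag = {e.tag: e for e in new}
    removed = sorted(old_by_tag.keys() - new_by_tag.keys())
    added = sorted(new_by_tag.keys() - old_by_tag.keys())
    # Same tag in both revisions, but type or flow target differs.
    changed = sorted(
        tag for tag in old_by_tag.keys() & new_by_tag.keys()
        if old_by_tag[tag] != new_by_tag[tag]
    )
    # Rule-based check: was a safety element dropped by this revision?
    safety_removed = [t for t in removed if old_by_tag[t].kind in SAFETY_KINDS]
    return {
        "added": added,
        "removed": removed,
        "changed": changed,
        "safety_removed": safety_removed,
    }


rev_c = [
    Element("P-100", "pump", "V-200"),
    Element("V-200", "vessel", "PSV-101"),
    Element("PSV-101", "psv", ""),
]
rev_d = [
    Element("P-100", "pump", "V-200"),
    Element("V-200", "vessel", ""),
    Element("FT-300", "transmitter", ""),
]

report = diff_revisions(rev_c, rev_d)
print(report)
```

In this toy case the report flags that the relief valve disappeared between revisions and that the vessel's flow target changed, which is the kind of finding a reviewer would want surfaced rather than discovered by hand.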

Airport Infrastructure: System Topology and Downstream Impact

An airport infrastructure team had critical system logic spread across thousands of engineering drawings, with no unified view of how systems depend on each other. During maintenance or a failure, engineers manually trace which drawings are affected, a process that creates delays in exactly the moments when speed matters most.

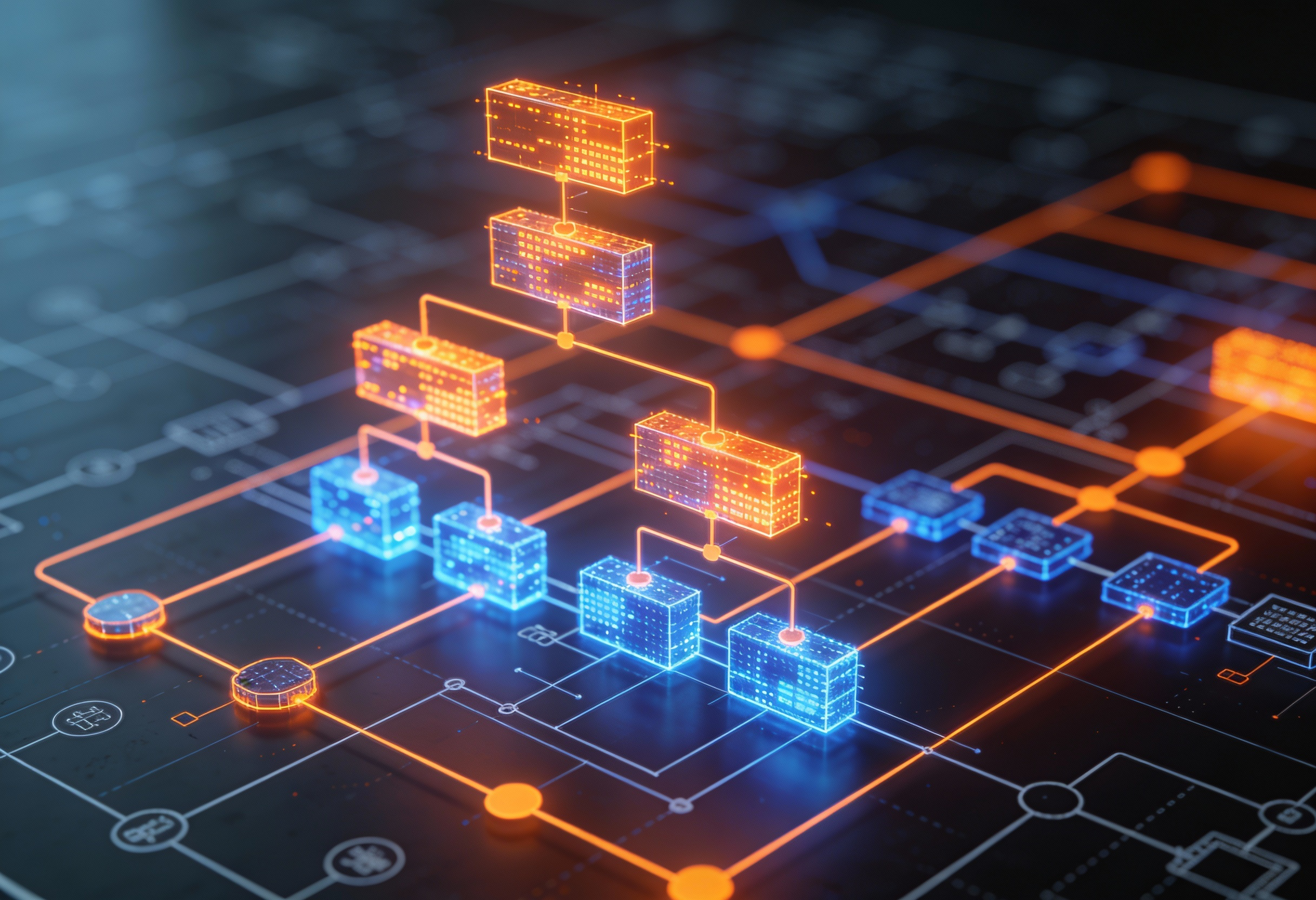

What they described was a topology problem: AI that understands not just what's in a drawing, but how systems connect across drawings, and what the downstream consequences of a change might be.

A search tool tells you nothing about downstream impact. A system that holds a relationship model across the full drawing set can start to answer that question.
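To make the idea of a relationship model concrete, here is a small Python sketch, offered as an assumption-laden illustration rather than a description of any real system. It treats systems as nodes in a dependency graph and walks the graph breadth-first to list what sits downstream of a failed system and which drawings cover it. The `FEEDS` and `DRAWINGS` maps and every name in them are invented for the example.

```python
from collections import deque

# Hypothetical dependency map: system -> systems that depend on it.
FEEDS = {
    "substation-A": ["baggage-conveyor-1", "lighting-T2"],
    "baggage-conveyor-1": ["baggage-sorter"],
    "lighting-T2": [],
    "baggage-sorter": [],
}

# Hypothetical index: system -> drawings that document it.
DRAWINGS = {
    "substation-A": ["E-001"],
    "baggage-conveyor-1": ["M-210", "E-114"],
    "lighting-T2": ["E-305"],
    "baggage-sorter": ["M-220"],
}


def downstream(start, feeds):
    """Breadth-first walk returning every system that depends on `start`."""
    seen = {start}
    order = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for dependent in feeds.get(node, []):
            if dependent not in seen:
                seen.add(dependent)
                order.append(dependent)
                queue.append(dependent)
    return order


affected = downstream("substation-A", FEEDS)
affected_drawings = sorted(
    {d for system in ["substation-A"] + affected for d in DRAWINGS.get(system, [])}
)
print(affected)
print(affected_drawings)
```

The walk itself is trivial. The real work is building and maintaining the graph from thousands of drawings, which is exactly what an index of file contents does not give you.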

A related question came up in that conversation, one I've heard in other forms since: how confident is the AI in what it found? In environments where an engineer needs to make a decision based on extracted data, "the system found a match" is not enough. What they need is a confidence indicator: this tag was extracted with high certainty, this one needs human review before you act on it.
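A confidence indicator of this kind can be sketched in a few lines of Python. This is a minimal example under stated assumptions: the extraction step already attaches a score between 0 and 1 to each tag, and the `REVIEW_THRESHOLD` of 0.90 is an arbitrary illustrative cut-off, not a recommended value.

```python
# Assumed cut-off; a real value would be set and validated per project.
REVIEW_THRESHOLD = 0.90


def triage(extractions, threshold=REVIEW_THRESHOLD):
    """Split extracted tags into high-confidence and needs-review lists."""
    high_confidence, needs_review = [], []
    for item in extractions:
        bucket = high_confidence if item["confidence"] >= threshold else needs_review
        bucket.append(item["tag"])
    return high_confidence, needs_review


# Hypothetical extraction results with per-tag confidence scores.
extracted = [
    {"tag": "PSV-101", "confidence": 0.98},
    {"tag": "FT-300", "confidence": 0.61},
    {"tag": "P-100", "confidence": 0.90},
]

high_confidence, needs_review = triage(extracted)
print("high confidence:", high_confidence)
print("needs review:", needs_review)
```

The point of the split is not to let high-confidence items bypass people. It is to tell the engineer where to look first.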

Power Infrastructure: The Governance Question

A power infrastructure project with regulatory oversight. The director of engineering, not the IT person, the engineering director, said something I've been thinking about since:

He wasn't describing a search problem. He was describing a governance and traceability problem. And it's the problem most AI vendors are simply not set up to answer.

What a Safety-Critical RFP Made Explicit

All of these conversations stayed with me. But it was a procurement document I encountered more recently that brought the pattern into the sharpest focus.

I won't name the sector or the client. What I will say is that it was a formal RFP for an AI proof of concept in a highly regulated, safety-critical operational environment. The scope requirements were precise in a way I hadn't seen before in an AI procurement document.

The required capabilities included:

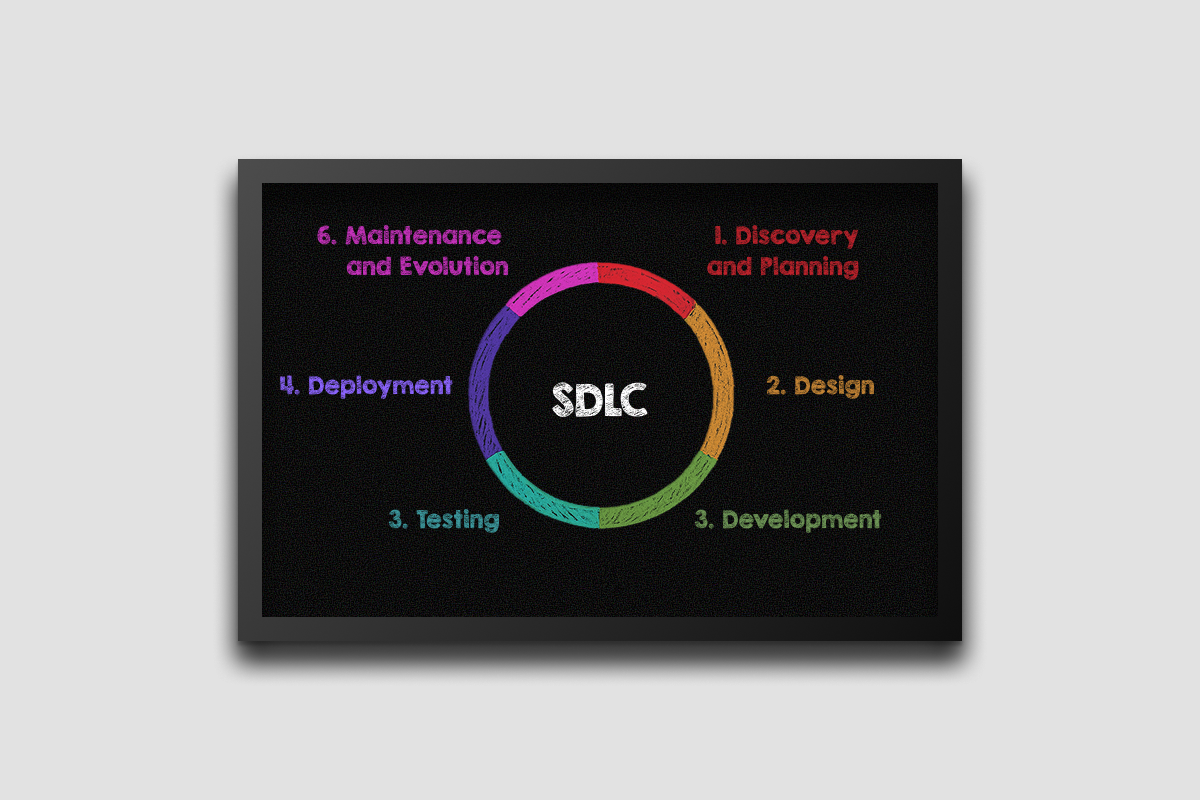

- Automated comparison of controlled document revisions

- AI-assisted interpretation of engineering drawings and schematics

- Procedural quality assurance support

- Full audit trails

- Mandatory human review and approval for every AI-generated output before it could be used in a workflow (stated as a hard requirement, not a preference)

The language throughout was specific: traceability, auditability, bounded scope, human oversight. The document was essentially setting conditions, not aspirations. We will consider AI for these workflows, but only if it operates inside our control structure, not alongside it, and not after the fact.

What struck me was not the technical requirements themselves. It was what they revealed about the buyer's experience. This is language that comes from organizations that have already thought carefully about what happens when an AI-assisted output is wrong. They've concluded that the answer is not better AI, but better-governed AI.

That is a very different conversation from "can you help us search our documents faster?"

Why These Environments Are a Leading Signal, Not an Edge Case

It would be tempting to read the chemicals manufacturer's pain or the safety-critical RFP as niche problems: too specific, too regulated, not transferable to the mainstream.

That reading is mistaken.

The most demanding environments produce the clearest requirements. When the consequences of a documentation error are high enough, organizations stop accepting ambiguity in what they ask from their tools. According to IAEA safety standards, document control and traceability are baseline requirements for nuclear and critical infrastructure, and the same logic is increasingly applied across energy, chemicals, and transport sectors.

Every serious procurement conversation in regulated infrastructure eventually arrives at the same things: traceable outputs, revision-aware processing, human-controlled workflows, documented AI scope. Sometimes it's stated as a hard requirement. Sometimes it surfaces forty minutes into a demo conversation. But it always arrives.

Why Better AI Doesn't Automatically Mean the Right AI

There's a real difference between AI that reads documents and AI that understands the relationships between them.

A document can look perfectly fine in isolation. The problem appears when you put it next to the three other documents it references, and one of them changed last month and nobody updated the cross-reference.

Or when the equipment tag on a P&ID doesn't match the tag in the asset register because two contractors used slightly different numbering conventions. Or when revision D of a controlled procedure removed a safety check that revision C included, and nobody flagged it because the documents were reviewed in isolation.

A search engine, even a very good one, finds things inside documents. It doesn't tell you that two documents are now inconsistent with each other. It doesn't tell you which other systems are affected when one drawing changes. It doesn't tell you how confident it is in what it extracted, or which outputs need human review before anyone acts on them. To do any of that, the system needs to hold a model of how documents connect and how much to trust what it found. And that is a different kind of tool.
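One of the checks described above, catching a reference to a document that has since been superseded, can be sketched as follows. This is a simplified Python illustration that assumes two inputs already exist: a register of current revisions and a list of the references each document makes. The `CURRENT_REVISION` and `REFERENCES` structures and all document IDs are hypothetical.

```python
# Hypothetical register: document ID -> current approved revision.
CURRENT_REVISION = {"SPEC-001": "D", "DWG-014": "B"}

# Hypothetical cross-references: document -> (referenced ID, referenced revision).
REFERENCES = {
    "PROC-7": [("SPEC-001", "C"), ("DWG-014", "B")],
    "PROC-9": [("SPEC-001", "D"), ("DWG-099", "A")],
}


def stale_references(references, current):
    """List references that point at a superseded or unregistered document."""
    findings = []
    for doc, refs in sorted(references.items()):
        for ref_id, ref_rev in refs:
            latest = current.get(ref_id)
            if latest is None:
                findings.append((doc, ref_id, ref_rev, "not in register"))
            elif latest != ref_rev:
                findings.append((doc, ref_id, ref_rev, f"superseded by rev {latest}"))
    return findings


findings = stale_references(REFERENCES, CURRENT_REVISION)
for finding in findings:
    print(finding)
```

Each document here would pass a review in isolation. The inconsistency only becomes visible once the references are checked against the register.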

From where I sit, in commercial conversations, this is the distinction that determines whether AI actually gets deployed into live engineering workflows or stays in the innovation lab.

The Governance Requirements Are Not the Obstacle, They're the Specification

One thing worth pushing back on: the framing that regulated environments are slow to adopt AI because of bureaucracy or risk aversion.

That's not what the evidence shows. Operators in these industries understand exactly what's at stake if an AI-assisted output is wrong in a compliance submission, a handover package, or a safety-critical procedure.

The European Union's AI Act, which treats AI systems in domains such as critical infrastructure as high-risk, explicitly requires human oversight, auditability, and traceability: the same requirements these operators have been asking for on their own.

The requirements I keep encountering in serious procurement processes are very specific: human review before any AI output enters a controlled workflow, full audit trails, outputs traceable to specific sources and revisions, AI scope explicitly bounded and documented.

These aren't compliance theatre. They're the minimum conditions under which a serious organization can actually deploy AI into engineering execution, not pilot it, deploy it.

The vendors who treat these requirements as obstacles are the ones who don't understand the market they're selling into.

Four Questions Worth Asking Any Vendor

After enough of these conversations, from the chemicals manufacturer counting man-days on P&ID validation, to the airport team tracing system dependencies, to the safety-critical RFP setting explicit governance conditions, a clear set of diagnostic questions has emerged.

1. Does every AI output trace back to a specific source, not just "a document", but a specific revision and element within it?

If the answer is "we provide source references at the document level", that's not enough for a governed engineering workflow.

2. Does the system understand revision history, or does it treat every version as a separate file?

In environments where document revisions carry legal and operational weight (P&ID updates in chemicals manufacturing, controlled procedures in safety-critical operations), this is the difference between a useful tool and a new way to create the same old confusion.

3. Is human review structurally built into the workflow, not optional, not dependent on the user remembering, but required before any AI output is acted upon?

If the answer is "users can choose to review before acting", that's not governance. That's hoping people don't skip a step under deadline pressure.

4. Can you show an auditor exactly where AI was involved and where human judgment took over?

In regulated environments, the answer to "what did the AI decide and what did the engineer verify?" needs to be available and clear, not eventually, but from the beginning.
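Three of these questions (source-level traceability, structural human review, and an auditable split between AI and engineer) can be illustrated together in one short Python sketch. It is a minimal example under assumptions, not any vendor's implementation: the `AIOutput` record, the `propose`, `approve`, and `release` functions, and the document IDs are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AIOutput:
    claim: str
    source_doc: str        # which document the claim comes from
    source_revision: str   # which revision of that document
    source_element: str    # which element inside that revision
    approved_by: Optional[str] = None
    audit: list = field(default_factory=list)

    def log(self, actor: str, action: str) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit.append((stamp, actor, action))


def propose(claim, doc, revision, element) -> AIOutput:
    """The AI step: create an output pinned to a specific source."""
    out = AIOutput(claim, doc, revision, element)
    out.log("ai", "proposed")
    return out


def approve(out: AIOutput, engineer: str) -> None:
    """The human step: a named engineer signs off."""
    out.approved_by = engineer
    out.log(engineer, "approved")


def release(out: AIOutput) -> str:
    """Refuse to pass anything downstream without a recorded approval."""
    if out.approved_by is None:
        out.log("system", "release blocked: no human approval")
        raise PermissionError("human approval required before release")
    out.log("system", "released")
    return f"{out.claim} [{out.source_doc} rev {out.source_revision}, {out.source_element}]"


finding = propose("PSV-101 removed", "DWG-014", "D", "PSV-101")
try:
    release(finding)
    blocked = False
except PermissionError:
    blocked = True

approve(finding, "j.doe")
released = release(finding)
actions = [action for _, _, action in finding.audit]
print(released)
print(actions)
```

The design choice that matters is that `release` fails by default. Review is not a setting the user can forget to switch on, and the audit list records who did what, in order.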

Where This Is Heading

The infrastructure market has already moved past the question of whether AI for engineering documentation can deliver value. That discussion is over. The answer is yes.

The question being asked now is different: which class of AI, operating under which conditions, can actually be trusted inside live engineering and compliance workflows?

The specialty chemicals manufacturer counting validation man-days, the airport team tracing system topology, the power infrastructure director asking about provenance, the safety-critical operator setting explicit governance conditions: they're all asking the same question in different languages.

The organizations that will deploy AI successfully in regulated infrastructure are not the ones chasing the most capable model. They're the ones asking whether the model can operate safely inside their control structure.

That's a harder question. It's also the right one.

Want to explore what governed AI looks like in practice? Azati's Managed AI offering is built around exactly this challenge: AI systems that are operated, monitored, and governed in production, not handed off after the pilot. Talk to our team.