A few weeks ago I came across a LinkedIn post from a VP of Engineering. He wasn't talking about layoffs or AI replacing developers. He was announcing something more interesting: two structural changes to his engineering organisation.

First: he was merging Data Engineering and Data Science into a single function – "Fullstack Data & AI Engineers." One role. One person owning the full data lifecycle, from pipeline to model to deployment.

Second: he was consolidating two separate support tiers into a unified SRE team focused on reliability, proactive system management, and using AI to reduce manual work.

His framing stuck with me: "Engineering, unified. Consolidating roles for clarity, speed, and ownership."

And then he said something I think deserves more attention: "For me, this is just the beginning. The way we define roles is changing, and teams that adapt early will have a real advantage." He's right. And this is something I've been thinking about for a while – because we're living it at Azati.

The job description of a programmer is being rewritten

Not by AI replacing engineers. By AI expanding what a single engineer can credibly own.

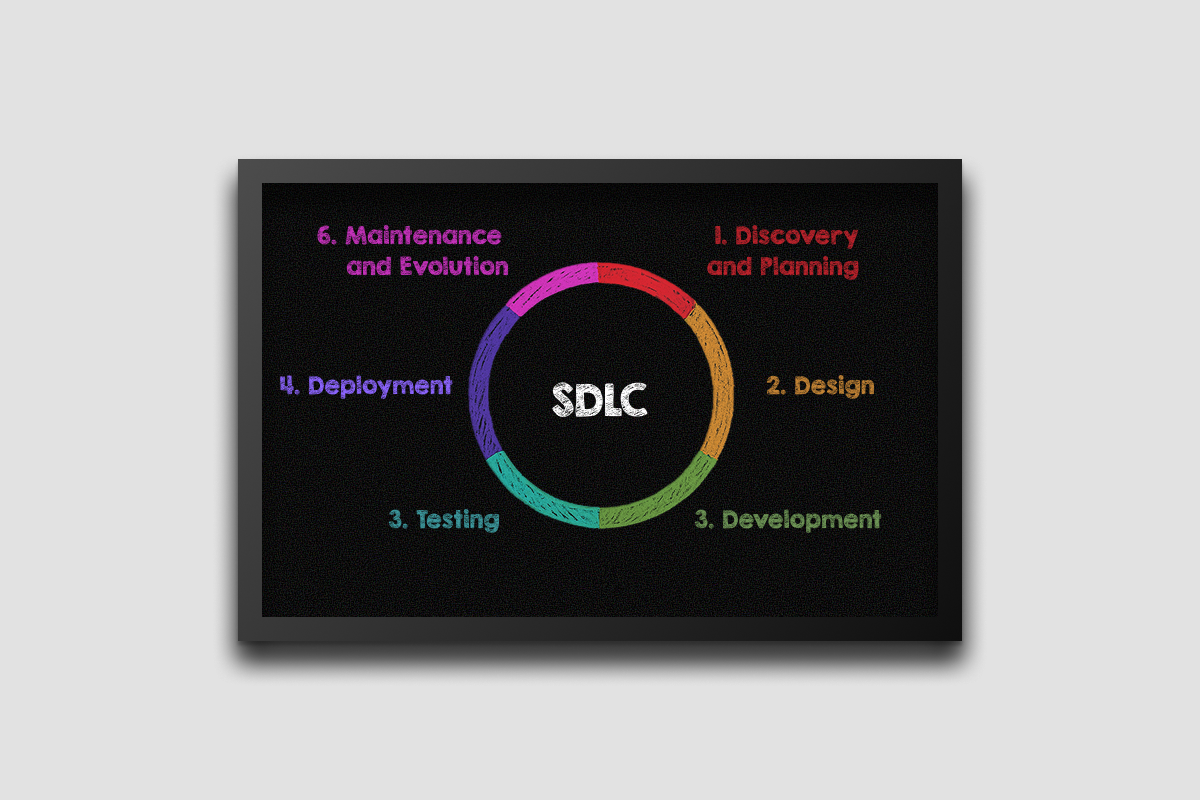

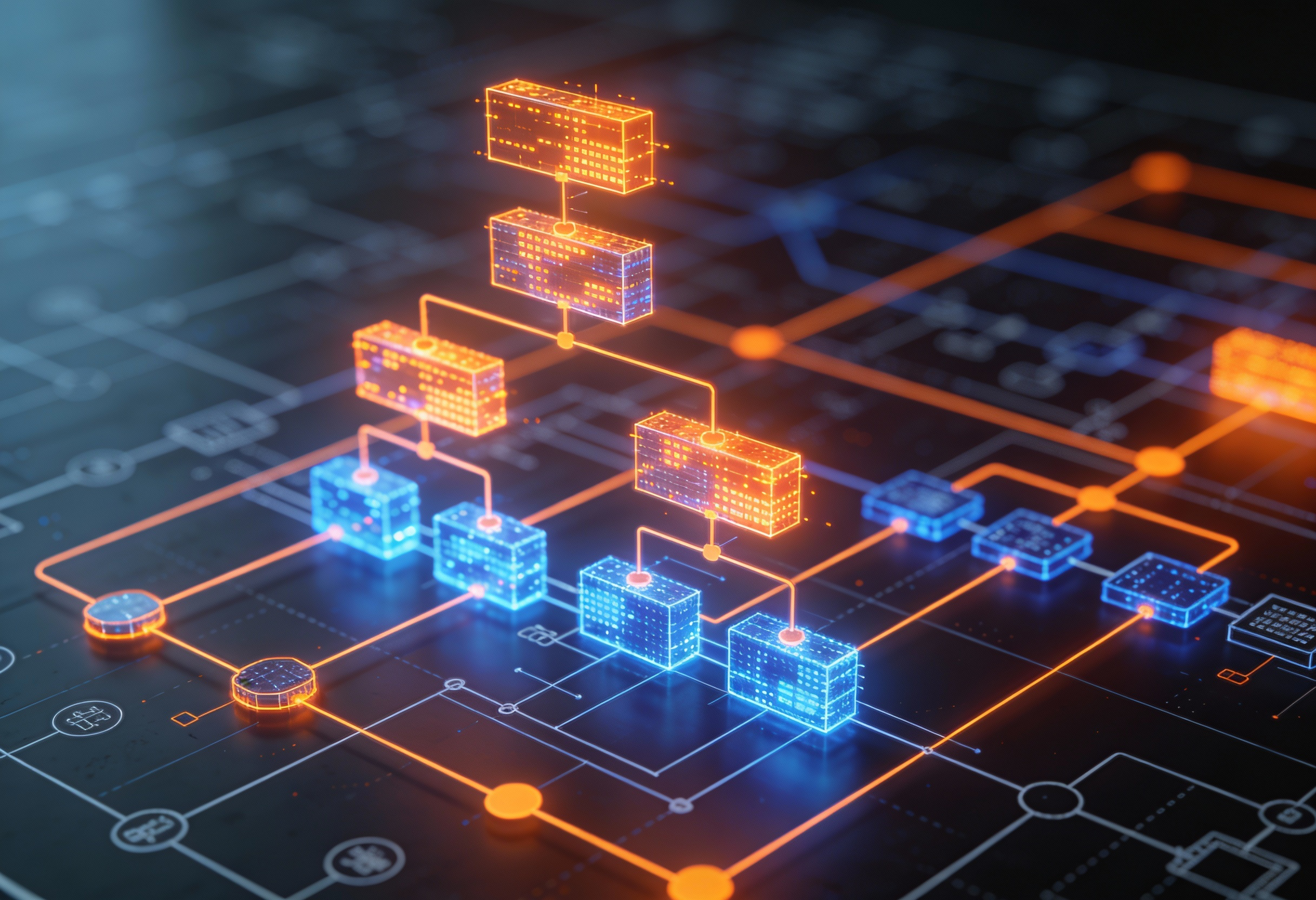

For two decades, the specialisation of software roles was a rational response to complexity. Codebases grew too large, surface areas too broad, for any one person to hold it all in their head. So we divided the work up: frontend here, backend there, data engineer over there, QA in another room. We built organisations around those divisions and wrote job descriptions that carefully carved out each lane.

That division of labour made sense when the cost of crossing boundaries was high – when understanding an unfamiliar layer required weeks of ramp-up, when context-switching between systems was genuinely expensive.

AI changes that cost structure fundamentally.

A well-equipped engineer with the right AI toolchain can now scaffold a backend service, understand an unfamiliar data model, write and run tests, review her own code for edge cases, and debug across layers – without waiting for a specialist at every handoff. At the same time, demand is growing fast for specialists who build, operate, and supervise AI tools, while people whose work is mostly repetitive and automatable will need to reskill or broaden their competencies.

The job is not disappearing. It's getting bigger.

This isn't about "vibe coding"

Let me draw a distinction I think matters.

There's a version of AI-assisted development that's essentially autocomplete at scale – paste a prompt, get some code, ship it without understanding it. That approach produces fast demos and slow disasters. It's not what I'm describing.

What's actually changing at the engineering level is something more disciplined: AI as a force multiplier on genuine expertise. An engineer who deeply understands data systems can now operate at the speed of someone with three extra specialists beside her. An engineer who understands distributed systems can prototype, instrument, and deploy in timeframes that used to require a whole team.

Frontend engineers, for example, are no longer just rendering views; they are increasingly responsible for how applications think, respond, and scale in real time. AI-first development environments are redefining how code is written, reviewed, and shipped – turning engineers into high-leverage architects.

The keyword is leverage. Not replacement. Not simplification. Leverage.

The best engineers I know right now are not the ones who use AI the most. They're the ones who use it most intelligently – knowing when to trust it, when to override it, and how to direct it toward the hard parts of a problem instead of the easy ones.

What role consolidation actually means in practice

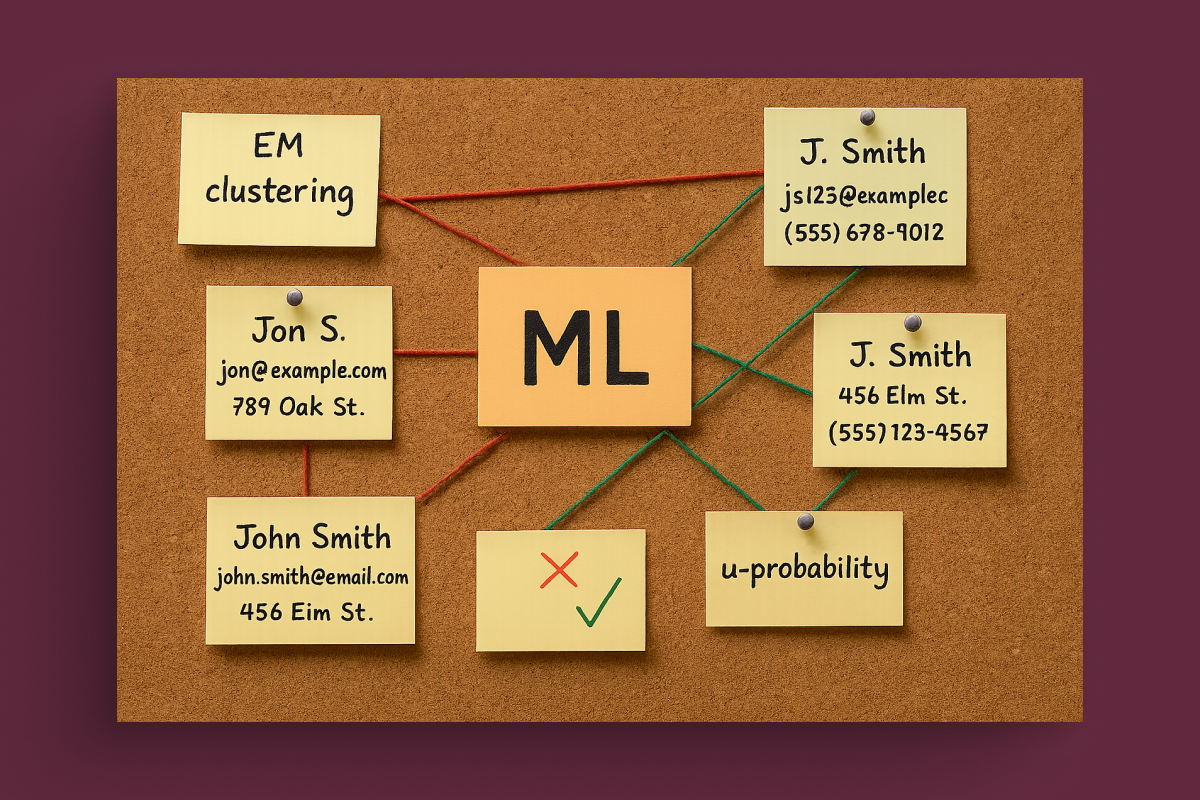

The natural pushback to that VP's plan to merge Data Engineers and Data Scientists into a single role is: doesn't that produce someone who does two jobs at half the quality?

Only if you're thinking about it in terms of the old job descriptions.

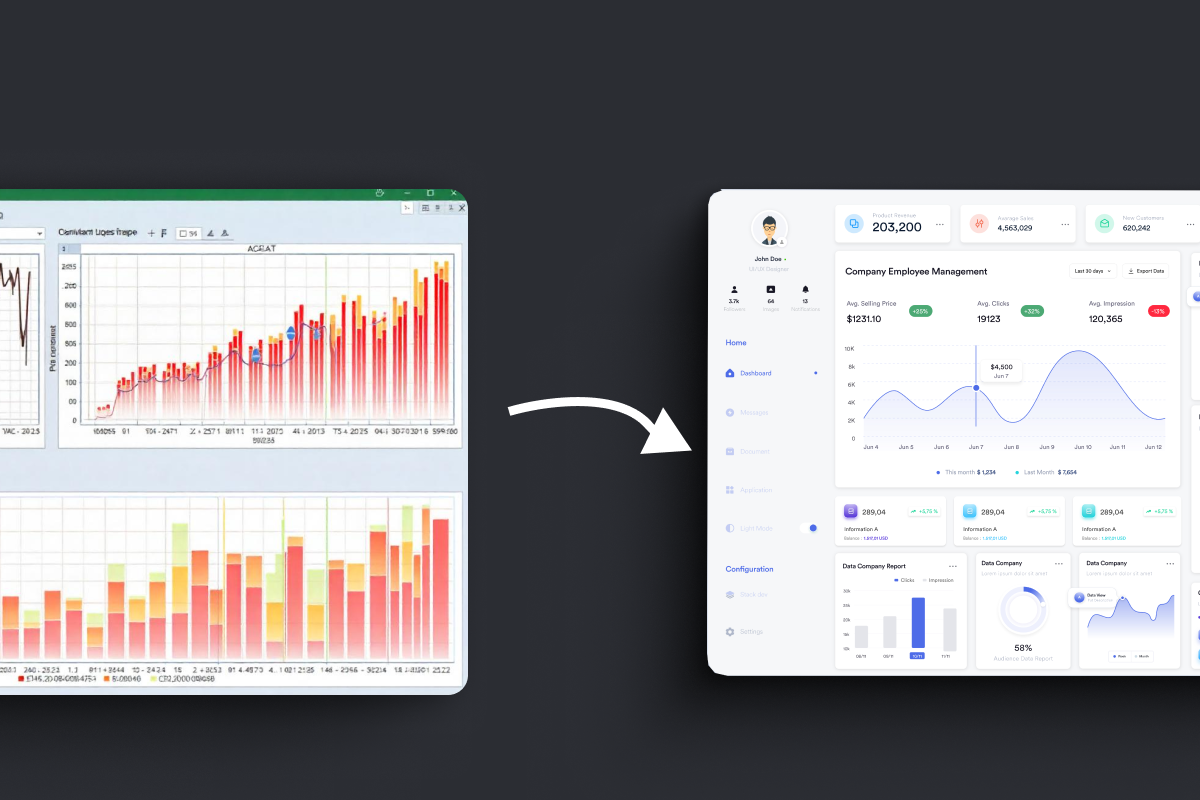

The real shift is in the ownership model. Previously, building a data product required a handoff between two specialists – one who understood the pipeline, one who understood the model. That handoff was a delay. It was also a diffusion of accountability: when something broke, whose problem was it?

A Fullstack Data & AI Engineer owns the whole outcome. They built the pipeline and the model. They know where the integration risk lives. They can debug end-to-end without assembling a committee. AI tools handle the translation work between layers that used to require the second specialist.

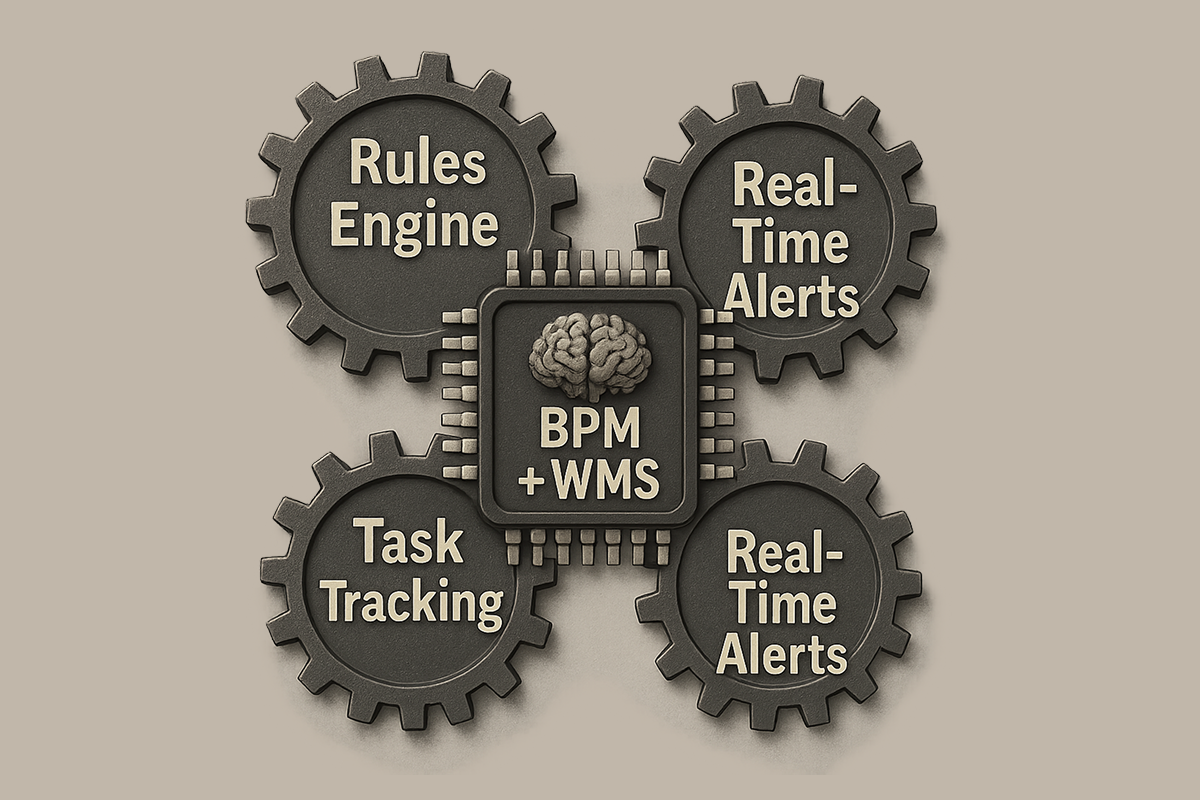

The same logic applies to the SRE consolidation. Two separate support tiers – one handling escalations, one handling infrastructure reliability – become a single team with a unified mandate: keep the system healthy, respond fast, use AI to reduce the manual work. Fewer handoffs. Clearer ownership. Better outcomes.

This pattern is showing up everywhere:

- Platform engineers absorbing infrastructure responsibilities that used to belong to separate DevOps and cloud teams

- Backend engineers building and owning their own observability pipelines

- Security shifting from a separate function to a responsibility embedded in every engineering team

- QA moving from manual testing to engineers who own automated quality from the start

The architecture of the engineering organisation is catching up to what AI makes possible.

What we're doing at Azati

We've been going through our own version of this – and to be clear, AI isn't a sudden pivot for us.

Seven years ago we shipped Digatex, our first serious AI-powered product. Three years ago we started ACOP – an ambitious internal system to run the company on AI rails, half real product, half forcing function for our own capability. So when I write about the engineer expanding, I'm not theorising. We've been inside this transition long enough to know what it actually requires.

What's changing now is depth and scope. Our strategic vector is explicitly AI-First – meaning we expect neural networks to be woven into every process, not bolted on as a layer. In practice that breaks down into three directions:

- AI Everywhere. AI integrated into the day-to-day work of every function, engineering and otherwise. Coding assistants are the obvious case. Less obvious: marketing, HR, and operational workflows where automation finally removes the friction that used to consume our most expensive hours.

- AI-Driven Solutions. The architectural shift in what we build for clients. AI as the core logic of a product, not a feature layered on top. Agentic systems. Autonomous components inside larger applications. Designs where the model isn't an add-on – it's the spine.

- Deep ML & Infrastructure. The unglamorous work that separates serious shops from demo shops. Model fine-tuning. Computer vision. Custom architectures for specific domains. The part that doesn't show up in a screenshot but determines whether the thing holds up under real production load.

We work primarily in complex, regulated domains – financial services, insurance, industrial automation. Our clients don't buy cheap engineering. They buy engineering they can trust to perform in production under real conditions. That's always meant a certain kind of rigour about how we hire and how we structure teams.

What's changed is what we expect from a single engineer on a project. We've moved away from strict role definitions toward what I'd call ownership radius – how much of a system a person can confidently understand, build, and take responsibility for. AI tools have expanded that radius significantly for our best engineers.

Concretely: we staff AI-augmented teams where engineers are expected to work across layers rather than within them. We've invested in shared AI workflows – not just coding assistants, but tooling for architecture review, documentation, test generation, and deployment verification. We evaluate engineering output not by velocity metrics but by the quality and durability of what ships.

This is harder to build than a traditional specialist team. It requires different hiring criteria, different onboarding, a different culture around accountability. But the results for clients are different too.

Why this matters for the people commissioning software

I want to be specific here, because this often gets misframed.

The value of AI-augmented, broader-ownership engineering is not that it makes software cheaper to build. Anyone selling you on that is either naive or not telling you the full story – the real cost savings from AI tend to get re-invested in quality, in speed, in doing things that were previously too expensive to even attempt.

The value is different:

- Speed to production. When one engineer owns a feature end-to-end – database to interface, infrastructure to observability – there are no handoff delays. No queue between the person who built the backend and the person debugging the frontend. Features that took six weeks in a traditional specialist model can take two when one person holds the whole picture.

- Fewer integration failures. A significant share of production bugs aren't logic errors inside a layer – they're failures at the boundary between layers. When one engineer owns both sides of that boundary, that class of problem largely disappears.

- Accountability you can actually act on. "Talk to the backend team" is not accountability. One person who built and owns a system end-to-end is accountable in a way that distributed teams simply cannot be.

- Faster response when things go wrong. The engineer who built it knows where to look. There's no reconstruction of context, no "which team owns this service?" The consolidated SRE model exists precisely for this – unified ownership means faster incident response.

None of this is an argument for building with fewer people. It's an argument for building with people whose scope of ownership matches the scope of the problem – and whose AI tooling lets them operate at that scope without burning out or cutting corners.

The harder question

I'll close with something I think about more than the tooling.

The biggest change AI is forcing in engineering organisations is not technical – it's cultural. The specialist identity runs deep. People have built careers around being the person who knows a particular layer, a particular technology, a particular system. That identity is genuinely threatened by a world where AI can bring a capable engineer up to working competence in an unfamiliar layer within hours rather than months.

The engineers who will thrive in the next decade are not the ones who guard their specialisation. They're the ones who use their specialisation as a foundation for going broader – who treat AI as the tool that lets their deep expertise extend further than it could before.

The VP who restructured his data and SRE teams understood something important: the organisational model should serve the outcome, not the other way around. If the job content of an engineer is changing – and it is – then the job title, the team structure, and the ownership model need to change with it.

We're deep in this ourselves. The consolidation is real, but it's not finished. What I'm confident about is that the teams who work this out early – who figure out how to get genuine leverage from AI without losing the rigour and depth that make engineering actually work – will have a structural advantage that compounds over time.